Last Updated on February 10, 2026

The question of whether artificial intelligence can truly perform professional consulting work has moved from academic curiosity to practical urgency. Businesses, prospects, and even consulting firms themselves are experimenting with autonomous AI agents in hopes they might eventually replace or augment human consultants. But recent research from Mercor, a research and expert-marketplace startup, suggests that today’s AI agents are nowhere near ready to take over the core analytical and decision-making work that drives consulting value.

A New Way of Evaluating AI in a Professional Consulting Context

Mercor’s new benchmark, called APEX-Agents, was intentionally built to fill a gap left by traditional AI evaluations. Instead of testing isolated question-and-answer ability, APEX-Agents simulates real professional workflows: long-horizon, multi-step tasks that professionals actually face when delivering client work. The researchers behind the project surveyed hundreds of experts from top firms, then constructed data-rich “work environments” with documents, spreadsheets, emails, and tool interactions that an agent would need to navigate to complete meaningful tasks.

In one simulated consulting world, for instance, an AI agent might be tasked with navigating a file system filled with client reports, analyzing consumption patterns, computing penetration metrics using spreadsheets, and then drafting a concise summary of insights. These are not multiple-choice quizzes; they are complex missions that professionals expect to spend hours digesting and executing.

The Results Are Out

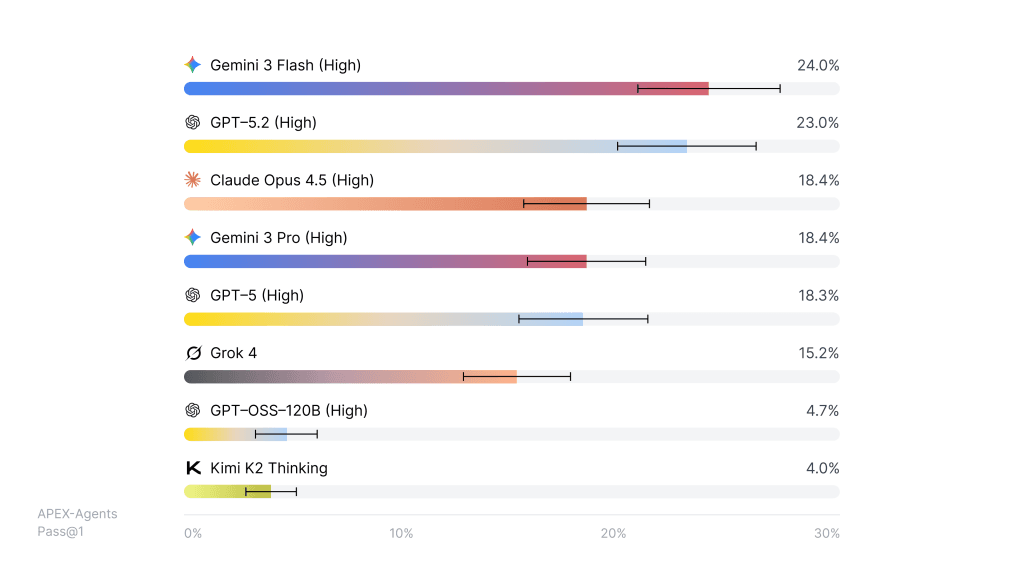

When Mercor tested a range of leading AI agents on these scenarios, the results were sobering. Even the best-performing models completed fewer than 25 percent of tasks correctly on the first try (Pass@1). On Mercor’s Pass@1 leaderboard, Google’s Gemini 3 Flash and OpenAI’s GPT-5.2 led the field at roughly 24 and 23 percent, respectively; other models lagged behind.

What do these numbers mean in practice?

Pass@1 measures the share of tasks an agent completes correctly on its first attempt. Grading is strict: to pass an APEX-Agents task, the output must satisfy every rubric criterion defined by consulting experts. A score in the low 20s therefore means that even state-of-the-art systems fail roughly three out of four real professional tasks on the first try.

The benchmark also shows that performance stays weak when agents are allowed multiple tries. With eight attempts per task (Pass@8), the best agent’s success rate rises only to about 40 percent. Even with retries, agents lack the reliability many business leaders assume they already have.
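To make the two metrics concrete, here is a minimal Python sketch of all-or-nothing rubric grading combined with a simple Pass@k calculation. It assumes a task counts as solved under Pass@k if at least one of its first k attempts meets every rubric criterion. The `Attempt` class, the `pass_at_k` function, and the three toy tasks are hypothetical illustrations, not Mercor’s evaluation code, and the APEX-Agents paper may compute its scores differently (for example, by averaging over more sampled runs).

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    # Hypothetical record of one agent run on one task: a True/False
    # verdict for each rubric criterion written by the experts.
    criteria_met: list[bool]

    def passed(self) -> bool:
        # All-or-nothing grading: every rubric criterion must be satisfied.
        return all(self.criteria_met)


def pass_at_k(attempts_per_task: list[list[Attempt]], k: int) -> float:
    """Share of tasks where at least one of the first k attempts passes."""
    if not attempts_per_task:
        raise ValueError("need at least one task")
    solved = sum(
        any(attempt.passed() for attempt in attempts[:k])
        for attempts in attempts_per_task
    )
    return solved / len(attempts_per_task)


# Toy data: three tasks, two attempts each (illustrative only).
tasks = [
    [Attempt([True, True, False]), Attempt([True, True, True])],   # solved on retry
    [Attempt([True, True, True]), Attempt([False, True, True])],   # solved first try
    [Attempt([False, False, True]), Attempt([True, False, True])], # never solved
]

print(pass_at_k(tasks, 1))  # 0.3333333333333333
print(pass_at_k(tasks, 2))  # 0.6666666666666666
```

In this toy example, one task is solved on the first attempt and a second only on the retry, so the score climbs from one third at k = 1 to two thirds at k = 2. A single missed rubric criterion is enough to fail an attempt, which is why strict grading keeps Pass@1 low.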

This is not because generative AI lacks intelligence, nor because it cannot produce formatted slides or drafts of analytical text. It is because consulting thinking is fundamentally an end-to-end process: it involves defining the right questions, prioritizing where to look for information, navigating fragmented knowledge across domains, and synthesizing coherent recommendations that hold up in an ambiguous context.

AI Models Still Fall Short

Mercor’s research highlights precisely where agents fall short. They struggle to manage ambiguity, find the relevant facts buried across multiple files and formats, and maintain context over prolonged sequences of decisions, which are exactly the challenges consultants face every day.

Put simply: today’s AI can assist analysis, speed up drafts, and improve routine tasks, but it does not yet replicate the core cognitive work of consulting professionals.

For StrategyCase readers, this has implications on two fronts. First, it should temper overly optimistic narratives that AI will soon make consultants obsolete. The ability to execute complex, multi-step client tasks reliably and coherently remains a distinctly human strength. Second, for candidates preparing for consulting interviews, it underscores why strong reasoning, judgment, and prioritization skills still matter far more than polished outputs alone. Mercor deliberately modeled its tasks on real consulting workflows because that is ultimately what employers care about: not just clean text or slides, but decisions that flow from structured thinking. McKinsey has already introduced an AI interview that evaluates how candidates perform when working collaboratively with the firm’s in-house AI.

The APEX-Agents benchmark is also an open-source contribution, giving researchers and developers a high-fidelity yardstick for improving agent architectures and training methods. Mercor has released the entire dataset, evaluation infrastructure, gold outputs, and rubrics under a Creative Commons license, inviting the AI community to iterate on these tasks.

Challenges Ahead, for AI and Humans

In the broader discussion about automation and knowledge work, Mercor’s results remind us of a fundamental truth: AI today excels at parts of a job; humans still excel at the job itself. Consultants integrate information across domains, wrestle with ambiguity, make judgment calls that blend experience with data, and deliver coherence at scale. Benchmarks like APEX-Agents show that while AI will increasingly augment human capability, the leap to full autonomy in professional consulting remains a substantial challenge.

In other words, AI will change how consulting work is executed, but not necessarily replace the role of the consultant in shaping decisions.

For full details, see Mercor’s APEX-Agents summary (https://www.mercor.com/blog/introducing-apex-agents) and the underlying arXiv research paper (https://arxiv.org/abs/2601.14242), which rigorously document the benchmark design, evaluation rubrics, and model results.