Last Updated on April 16, 2026

Many candidates preparing for the McKinsey Solve Game in 2026 are asking one question:

Is there a third game in the McKinsey Solve beyond Red Rock and Sea Wolf?

Based on a large number of recent candidate reports and real Solve test experiences, the answer is yes. As of April 2026, these reports indicate that the new module has been rolled out across all regions.

The McKinsey Solve third game, referred to as the Sustainable Future Lab, is a new behavioral module within the assessment that evaluates decision-making and judgment in team-based scenarios.

This additional game represents a clear shift in how McKinsey evaluates candidates, moving beyond pure analytical problem-solving. It resembles a situational judgment test embedded within the McKinsey digital assessment.

This article summarizes the most up-to-date insights from real candidate experiences as of April 2026 and will be updated as new information emerges.

From what we have seen, most guides either oversimplify this module or force it into rigid scoring systems. Based on real candidate data, neither approach reflects how this game actually works.

McKinsey Solve Sustainable Future Lab (2026): Quick FAQ

Is there a third game in the McKinsey Solve in 2026?

Yes. Every candidate we spoke to in April 2026 reported a third game in their McKinsey Solve.

Do all candidates get the third game?

Yes. Current reports indicate that the third module is fully rolled out globally.

What is the new McKinsey Solve game module?

The new McKinsey Solve module, Sustainable Future Lab, is a behavioral, decision-focused game that evaluates how candidates operate in realistic team scenarios.

Is the new McKinsey Solve game a behavioral or personality test?

It closely resembles a behavioral assessment, similar to a situational judgment test, rather than a trait-based personality test. It is embedded within the broader Solve as a decision-making simulation.

Is it harder than the other games?

It is different rather than harder. The challenge lies in judgment and decision-making in an interpersonal context, not calculations.

How is the third game scored?

There is no verified information on a specific scoring model, weighting, or predefined dimensions as McKinsey keeps this information confidential. However, based on our McKinsey background and candidate reports, we have reverse-engineered some of the important performance dimensions. Outcomes depend on the consistency and quality of your decision-making rather than selecting individually “correct” answers.

Should I prepare for it?

Yes. It is important to understand how to approach these ambiguous team scenarios and to practice your decision-making with test simulations.

Does the third game matter for passing?

The third module complements the core analytical games (Red Rock and Sea Wolf) by adding a behavioral dimension. Strong overall performance across modules matters, rather than any single game determining the outcome.

Is this the return of the McKinsey Solve Ecosystem game?

No. Current evidence suggests this is not a direct return of the Ecosystem game, but a new format focused on behavioral decision-making rather than system design.

How is the McKinsey Solve evolving in 2026?

The McKinsey digital assessment is evolving to include both analytical problem-solving (Red Rock, Sea Wolf) and behavioral evaluation through this new module.

Is There a Third Game in the McKinsey Solve (2026)?

Yes.

Candidates across several regions (Europe, Middle East, United States) have reported encountering a third module, called Sustainable Future Lab, alongside the traditional Red Rock and Sea Wolf games.

This is consistent with how the Solve has evolved historically. The assessment is continuously updated to maintain its effectiveness and prevent candidates from over-preparing for static formats.

Overview of the New McKinsey Solve Module

The new module differs significantly from the existing games in both format and focus.

What is the Sustainable Future Lab McKinsey module?

Instead of solving analytical optimization problems, candidates are placed into a decision-making simulation that mirrors real consulting team scenarios (although set in a research context to keep the non-business framing of the Solve). You take on the role of a team member and are asked to respond to situations that unfold over time.

The emphasis is no longer just on arriving at the correct numerical answer, but on how you make decisions, interact with others, and navigate ambiguity. In many ways, this module functions similarly to a situational judgment test, but within the broader context of the McKinsey Solve.

This represents a clear shift from pure analytical evaluation toward assessing how candidates operate in realistic team environments. It is more of a behavioral and fit assessment, similar to what you would face in a traditional McKinsey PEI interview, just more dynamic.

Why McKinsey is Introducing This Module

The addition of the new game is not random. It reflects a clear shift in how McKinsey evaluates candidates.

There are three underlying drivers:

The Solve became too trainable

Over time, Red Rock and Sea Wolf have become increasingly well understood by candidates through:

- repeated candidate reports

- practice simulations

- pattern recognition over time

As a result, strong performance can partly be achieved through preparation and familiarity rather than pure problem-solving ability.

McKinsey is incentivized to continuously evolve the assessment to maintain its predictive power.

The need to test real consulting behavior

Traditional Solve modules primarily assess analytical skills:

- optimization

- logical reasoning

- data interpretation

However, real consulting work goes beyond this.

Consultants operate in:

- ambiguous environments

- team-based settings

- situations with no clear “correct” answer

The new module introduces scenarios that better reflect how candidates actually behave on the job, not just how they solve structured problems.

A shift toward simulation-based evaluation

The broader trend in consulting recruiting is moving toward realistic, simulation-based assessments.

Instead of testing isolated skills, firms are increasingly testing:

- decision-making over time

- consistency in thinking

- ability to navigate trade-offs

The Sustainable Future Lab fits directly into this shift.

It moves the Solve from a static problem-solving test toward a dynamic, behavior-driven simulation of consulting work.

What This Means for Candidates

The introduction of this game signals a broader trend in the evolution of the McKinsey Solve toward more realistic, behavior-driven evaluation methods.

The McKinsey Solve is becoming more comprehensive, assessing not only analytical ability but also behavioral decision-making and team interaction.

McKinsey is evaluating how you:

- think

- decide

- operate in realistic team situations

Candidates who understand this shift early will have a clear advantage.

This means that preparation needs to evolve as well.

Focusing only on math, logic, and optimization is no longer sufficient. Candidates must also be prepared to demonstrate strong judgment, communication awareness, and the ability to operate effectively in ambiguous team settings.

What We Know vs. What We Don’t (April 2026)

Given the amount of conflicting information online, it is critical to separate verified candidate signals from speculation. The points below reflect aggregated insights and current data from recent real Solve experiences.

What we know (high confidence)

- A third module has been reported by candidates across multiple regions (Europe, Middle East, United States)

- The format is behavioral and resembles a situational judgment test (SJT)

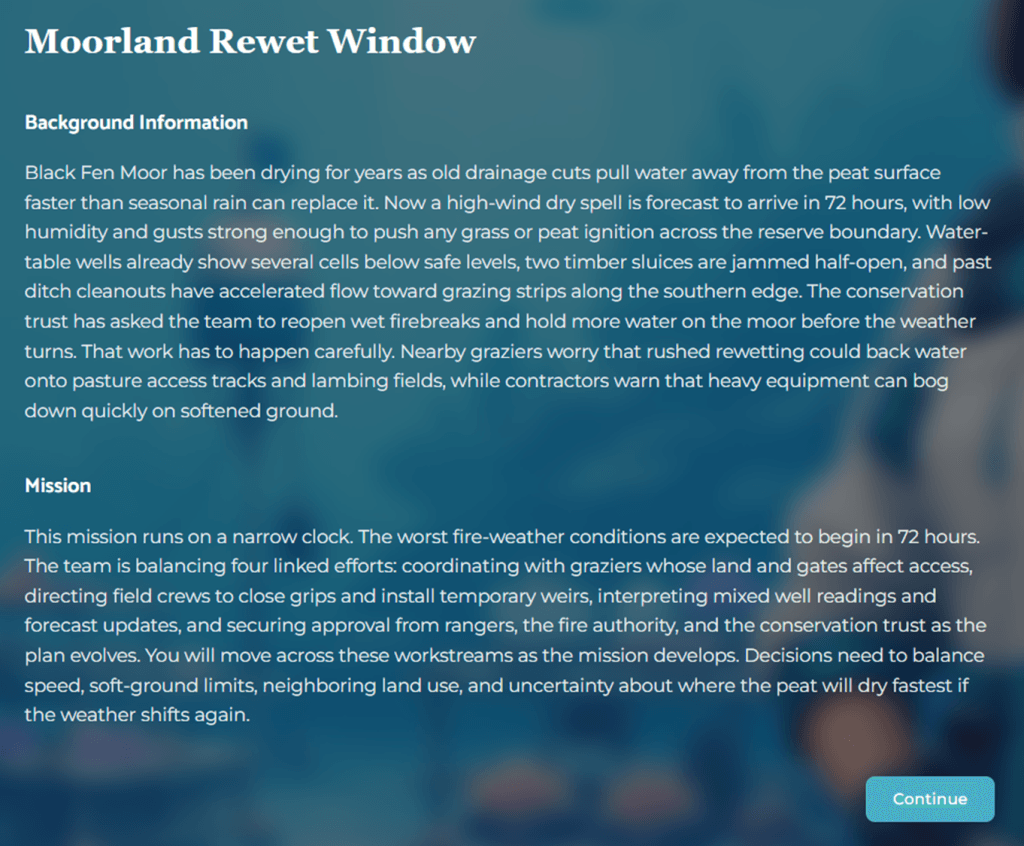

- The module typically includes 13 questions within 20 minutes

- Decisions appear to follow a branching logic, where earlier answers influence later scenarios

What is likely (medium confidence)

- The module evaluates decision-making consistency rather than isolated answers

- It tests judgment in team settings, including how candidates balance performance, collaboration, and ambiguity

What is unconfirmed (low confidence / speculation)

- Any specific scoring model, including weights, point systems, or “archetypes”

- Fixed evaluation dimensions (e.g., predefined “traits” or competency buckets)

- A standardized structure with defined phases such as priority rankings or self-assessment surveys

The key takeaway: while the existence and general direction of this module are increasingly clear, specific explanations circulating online go beyond what current evidence supports.

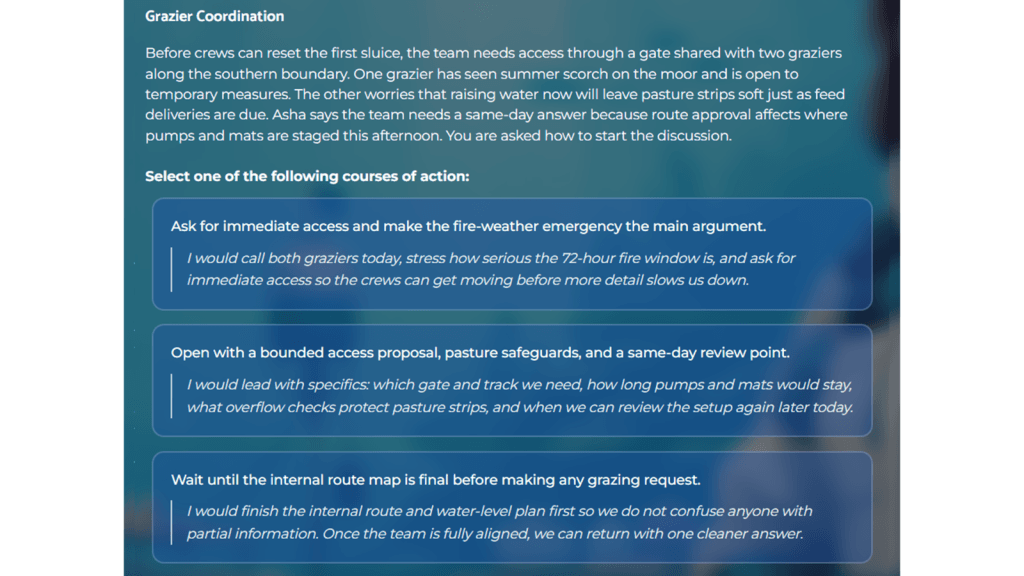

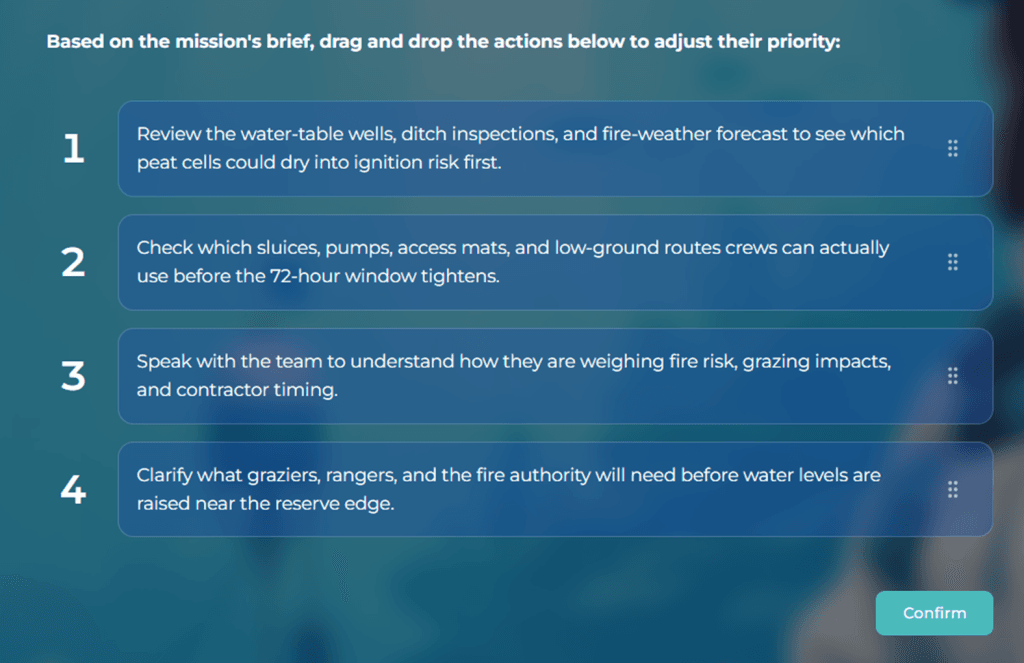

Format and Structure

The format of the game is notably different from Red Rock and Sea Wolf.

It is more text-heavy and requires careful reading and interpretation of scenarios. Candidates are typically presented with around 13 questions to complete within 20 minutes.

Most questions are multiple-choice, usually offering three answer options. The flow is sequential, meaning that scenarios build on each other rather than being isolated.

Some questions are prioritization questions.

Time management and reading precision become critical, as the challenge is less about calculation speed and more about understanding context and making sound decisions quickly.
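To make the time budget concrete, here is a minimal sketch of the arithmetic. It assumes the candidate-reported format of 13 questions in 20 minutes holds and that every question gets equal time, which is a simplification since longer scenarios will need more reading time.

```python
# Rough per-question time budget, assuming the candidate-reported format
# (13 questions, 20 minutes) and equal time per question (a simplification).
TOTAL_MINUTES = 20
NUM_QUESTIONS = 13


def seconds_per_question(total_minutes: int, num_questions: int) -> float:
    """Return the average number of seconds available per question."""
    return total_minutes * 60 / num_questions


budget = seconds_per_question(TOTAL_MINUTES, NUM_QUESTIONS)
print(f"About {budget:.0f} seconds per question")  # About 92 seconds per question
```

In other words, you have roughly a minute and a half to read each scenario and decide, so careful but brisk reading matters more than deliberating over any single option.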

Compared to Red Rock and Sea Wolf, this format aligns more closely with a behavioral assessment than a purely analytical game, requiring strong judgment rather than calculation speed.

The third module cannot be memorized, but Red Rock and Sea Wolf still determine your baseline performance. If you underperform there, a strong showing in the third module is unlikely to compensate.

If you want to practice the actual games (Red Rock, Sea Wolf, and Sustainable Future Lab), use our simulation suite here: Complete McKinsey Solve Game Guide and Simulations (all modules explained and playable).

How the Game Works (Decision Logic)

One of the defining features of this game is its branching structure.

Your answers influence how the scenario develops. Instead of facing independent questions, each decision shapes the next situation you encounter. This creates a dynamic environment that mirrors real consulting work and reinforces the idea of a decision-making simulation rather than a static problem-solving test.

Because of this, consistency in reasoning is key. Contradictory choices across questions can signal weak judgment or lack of a clear decision framework.

Candidates need to think not just about the immediate question, but about the broader narrative and implications of their choices.
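The branching idea can be pictured as a small decision tree. The sketch below is purely illustrative: the scenario texts, answer labels, and the `Scenario` and `play` names are hypothetical and are not taken from the real module, whose internal logic McKinsey has not published.

```python
from dataclasses import dataclass, field


@dataclass
class Scenario:
    """One step in a branching simulation (all content here is hypothetical)."""
    prompt: str
    # Maps an answer label to the id of the scenario that answer leads to.
    next_by_answer: dict[str, str] = field(default_factory=dict)


# Hypothetical two-level tree; the real module's scenarios are not public.
SCENARIOS = {
    "start": Scenario(
        "A team member is silent in a discussion.",
        {"invite_input": "engaged", "ignore": "disengaged"},
    ),
    "engaged": Scenario("The team member shares a useful concern."),
    "disengaged": Scenario("The team member withdraws further."),
}


def play(answers: list[str], start: str = "start") -> list[str]:
    """Follow a sequence of answers and return the ids of the scenarios visited."""
    path = [start]
    current = start
    for answer in answers:
        next_id = SCENARIOS[current].next_by_answer.get(answer)
        if next_id is None:  # unknown answer or end of this branch
            break
        path.append(next_id)
        current = next_id
    return path


print(play(["invite_input"]))  # ['start', 'engaged']
print(play(["ignore"]))        # ['start', 'disengaged']
```

The point of the sketch is that two candidates who answer the first question differently no longer see the same second question, which is why thinking about the broader narrative matters more than treating each question in isolation.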

What McKinsey Is Testing with the Sustainable Future Lab

This new module focuses on a different set of capabilities compared to the traditional Solve games. Unlike the earlier games, which focus on analytical reasoning, this module is best understood as a behavioral assessment embedded within the evolving McKinsey Solve.

Key areas include:

- Team dynamics: how you interact with and manage others in a group setting

- Inclusion and leadership behavior: whether you actively bring others into the discussion

- Decision-making under ambiguity: how you act when there is no clearly “correct” answer

A central tension in many scenarios is the trade-off between:

- Maintaining team comfort and cohesion

- Driving performance and achieving the objective

For example, candidates may face situations where team members are disengaged or silent and need to decide whether to push more assertively or create a more inclusive environment.

The goal is not to select the “nicest” answer, but the one that demonstrates effective judgment in a professional context.

Is the New Solve Game a Situational Judgment Test?

While not officially labeled as such, the new McKinsey Solve module closely resembles a situational judgment test. Candidates are presented with realistic consulting team scenarios and must choose how to respond, balancing performance, collaboration, and judgment.

This type of behavioral assessment is not entirely new in consulting recruiting. Firms like Bain & Company already incorporate similar elements through assessments such as the Sova test, and platforms like TestGorilla are widely used across industries to evaluate comparable decision-making and behavioral traits.

The key difference is that this is embedded within a broader decision-making simulation, rather than a standalone test.

Key Differences vs. Red Rock and Sea Wolf

Compared to the existing Solve games, this new module represents a clear shift.

- Less numerical and optimization-heavy

- More focused on behavioral and situational judgment

- Closer to real consulting team dynamics

While Red Rock and Sea Wolf primarily test analytical thinking and problem-solving, this game evaluates how you operate within a team.

In other words, it tests not just how you think, but how you act.

| Feature | Red Rock | Sea Wolf | Sustainable Future Lab |
|---|---|---|---|
| Focus | Optimization | Pattern recognition | Decision-making |
| Format | Quantitative | Analytical | Behavioral |
| Time pressure | High | High | Moderate |
| Key skill | Math & logic | Data interpretation | Judgment |

In essence, this shifts the Solve from a purely analytical test toward a hybrid model that combines problem-solving with situational judgment testing.

How to Prepare

At this stage, preparation should focus on underlying skills rather than memorizing specific answers.

Key principles include:

- Structured thinking: approach each situation with a clear and logical framework

- Clear prioritization: identify what matters most in the scenario

- Balanced judgment: avoid one-dimensional decision-making

It is particularly important to avoid extremes:

- Being overly passive and avoiding difficult decisions

- Being overly aggressive and disregarding team dynamics

Strong candidates strike a balance between driving outcomes and maintaining effective collaboration.

A Simple Decision Framework for the New Game

Most candidates approach this module by trying to guess the “right” answer.

That is the wrong approach.

The game is not testing isolated answers. It evaluates how you consistently make decisions under ambiguity. To do this well, you need a simple, repeatable mental model you can apply across all scenarios.

Use the following framework:

1. What is the objective?

Start by clarifying what actually matters in the situation.

- Driving the project forward

- Maintaining effective team dynamics

- Balancing both

In most cases, the correct direction is not one extreme. You are expected to move the work forward while keeping the team functional.

2. What is the constraint?

Identify what makes the situation difficult.

- Time pressure

- Missing or incomplete information

- Conflicting stakeholder interests

- Misalignment within the team

Strong candidates explicitly recognize these constraints before acting.

3. What action moves both forward?

Avoid extreme responses.

- Purely aggressive: pushing forward while ignoring people

- Purely passive: preserving harmony but not making progress

The strongest answers are directional but balanced. They move the situation forward while managing the team context.

4. What signal does this send?

Every decision signals how you operate as a consultant.

- Leadership: do you take ownership and provide direction?

- Inclusion: do you bring others into the discussion?

- Judgment: do you adapt to context rather than applying a rigid style?

Your answers should reflect a coherent decision-making style, not random reactions.

Example Scenarios and Answers

Example 1: Silent team member

A team member is not contributing in a discussion.

- Weak approach: ignore them and move on

- Weak approach: call them out directly in a confrontational way

- Strong approach: actively invite their input and integrate it into the discussion

Why: You move the discussion forward while strengthening team engagement.

Example 2: Conflicting priorities

Two stakeholders push for different directions.

- Weak approach: pick one side immediately

- Weak approach: delay the decision to avoid conflict

- Strong approach: clarify priorities and align on criteria before deciding

Why: You create structure and enable a better decision instead of reacting impulsively.

Example 3: Time pressure

The team is running out of time and progress is slow.

- Weak approach: continue discussing without direction

- Weak approach: force a decision without input

- Strong approach: summarize options, propose a direction, and move forward

Why: You demonstrate leadership while still acknowledging team input.

What this means in practice

This module rewards candidates who:

- Think in trade-offs, not extremes

- Act with direction, not hesitation

- Maintain consistency across decisions

If you apply this framework consistently, you will perform significantly better than candidates trying to “game” individual questions.

Common Mistakes: What Current Guides Get Wrong

As more information about the Sustainable Future Lab emerges, current preparation guides are starting to overfit the format or misinterpret what is actually being tested. This leads candidates to adopt the wrong strategies.

Here are the most common mistakes:

Trying to find the “right answer”

Many candidates assume each question has a clearly correct choice.

In reality, most options are plausible. The evaluation is less about picking a perfect answer and more about whether your choices reflect sound and consistent judgment.

Switching styles question by question

Some candidates try to optimize each question individually by changing their approach.

For example:

- collaborative in one scenario

- directive in the next

- analytical in another

This creates inconsistency. Strong candidates apply a coherent decision-making approach across all scenarios.

Optimizing for “niceness”

A common trap is to always choose the most agreeable or team-friendly option.

This often leads to:

- avoiding difficult decisions

- failing to provide direction

- prioritizing comfort over progress

Consulting requires balancing collaboration with execution. Being “nice” without moving things forward is not effective.

Over-indexing on personality test logic

Some guides frame this module as a personality or behavioral test with predefined traits or profiles.

This leads candidates to:

- overanalyze answer patterns

- try to “game” the test

- focus on fitting into a category rather than solving the situation

There is no evidence that this approach reflects how the module is actually evaluated.

The key takeaway: This is not a personality test.

It is a decision consistency and judgment test under ambiguity.

Candidates who treat it as a system to game will struggle. Candidates who apply clear, structured, and consistent thinking will perform well.

Is The New Module Already Part of the Evaluation?

Yes.

What Should You Focus on For Now?

If you want to prepare specifically for Red Rock and Sea Wolf (the two core analytical games) as well as the new Sustainable Future Lab, we’ve built a simulation suite that mirrors the real Solve experience.

The simulations are designed to fully resemble the real assessment, allowing you to practice structured thinking, data interpretation, and optimization under time pressure. Rather than relying on generic tips, the suite trains you on the exact skills McKinsey evaluates, including hypothesis-driven decision-making, prioritization, and numerical reasoning, ensuring you build the analytical and personal foundation required to perform at a top level in the Solve.


Final Thoughts: The Solve Is Evolving

The introduction of the Sustainable Future Lab, a behavioral, decision-based module, reflects a clear direction: McKinsey is moving toward more realistic assessments of how candidates perform in actual consulting environments.

For candidates, this creates both uncertainty and opportunity.

Those who are aware of these changes early and adapt their preparation accordingly will have a clear advantage over those relying on outdated assumptions.

This article reflects the most recent candidate insights and will be continuously updated as more information about the third game becomes available.