Last Updated on April 29, 2026

The McKinsey Solve Game, the firm’s assessment tool, is now an 85-minute, three-module test that filters out roughly 75-80% of candidates before they ever speak to a consultant. Red Rock, Sea Wolf, and the new Sustainable Future Lab all track process signals behind every click, not just your final answers.

Most candidates treat Solve like a puzzle game and get screened out. They practice in the wrong direction, freeze on the interface, and watch the clock burn while McKinsey logs every backtrack and hesitation as a weak process signal. One failed attempt locks you out of reapplying for 12 to 24 months, depending on the office.

I spent five years at McKinsey as a Senior Consultant and have coached 9,000+ candidates for this exact assessment through our prep programs. Among StrategyCase users who use our suite, the reported pass rate is 89%. This guide walks you through the current 2026 format, the dual-scoring logic most write-ups miss, and the three-layer preparation model that separates passers from the 75-80% who do not make it.

Key Takeaways

- McKinsey Solve runs 85 minutes across three games: Red Rock Study (35 min), Sea Wolf (30 min), and Sustainable Future Lab (20 min, added in early 2026).

- Every click is tracked. McKinsey scores both product (outcome quality) and process (how you got there), which is why “just run more practice games” is the wrong prep approach.

- Estimated unaided pass rate is 10 to 20%. Candidates who train strategy first, then drill mechanics, then run realistic simulations consistently outperform those who rely on volume-based prep.

- You cannot retake Solve for 12 to 24 months after a fail, so treating it as “just part of the funnel” is the costliest mistake I see.

What the McKinsey Solve Game Actually Is in 2026

McKinsey retired the old paper Problem Solving Test (PST) in 2019 and replaced it with a gamified assessment built in partnership with Imbellus (later acquired by Roblox in 2020). The test has cycled through multiple formats since. As of April 2026, the live version delivered to all candidates contains three modules:

- Red Rock Game, 35 minutes of ecosystem research, quantitative analysis, and rapid-fire mini-cases

- Sea Wolf Game (Ocean Treatment), 30 minutes of multi-constraint optimization across contaminated ocean sites

- Sustainable Future Lab Game, 20 minutes of behavioral judgment scenarios (new, rolled out March and April 2026)

Total active test time is 85 minutes. Add 5 to 10 minutes of untimed tutorials before each game, plus tech setup. Plan for more than two hours of uninterrupted focus on test day.
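
To see where the two-hour figure comes from, here is a minimal Python sketch that adds up the module times and the tutorial range quoted above. The `setup_minutes` parameter and its value of 10 are my own placeholder for tech setup, not a McKinsey figure.

```python
# Active test time per module, in minutes (figures from the list above).
MODULE_MINUTES = {
    "Red Rock": 35,
    "Sea Wolf": 30,
    "Sustainable Future Lab": 20,
}

# Untimed tutorial range per game, in minutes (from the paragraph above).
TUTORIAL_MIN, TUTORIAL_MAX = 5, 10


def session_range(setup_minutes: int = 0) -> tuple[int, int]:
    """Return the (shortest, longest) total session length in minutes."""
    active = sum(MODULE_MINUTES.values())
    games = len(MODULE_MINUTES)
    low = active + games * TUTORIAL_MIN + setup_minutes
    high = active + games * TUTORIAL_MAX + setup_minutes
    return low, high


# setup_minutes=10 is an assumed value for browser checks and login.
low, high = session_range(setup_minutes=10)
print(f"Plan for {low} to {high} minutes")
```

With slow tutorials and a few minutes of setup, the upper end passes the two-hour mark, which is why blocking a longer window is the safer choice.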

The invitation arrives by email after you pass the resume screen. You get a time window, usually 3 to 7 days, to complete Solve at home on your own computer. No interviewer, no proctor, no pause button, no retake inside the lockout window if you fail.

Why McKinsey Replaced the PST

Three reasons, in the order that actually drives the decision inside the firm:

1. Bias reduction. The PST disadvantaged non-native English speakers and candidates from certain academic backgrounds. Gamified simulations score reasoning without literacy penalties and reduce business-background bias.

2. Cost and scale. McKinsey reviews more than 1 million applications per year. A self-serve digital test cuts first-round screening costs by an order of magnitude.

3. Signal quality. The assessment captures process data (click streams, backtracks, time-on-task) that a paper test cannot. That gives recruiters a second dimension to evaluate beyond “did they get the right answer.”

That third point is the one most candidates miss, and it is why generic “do more questions” prep fails. I will come back to it.

If you want to learn more about McKinsey’s rationale for the Solve Game, Fortune spoke with Katy George, McKinsey & Company’s chief people officer, regarding the impact of prevailing labor market trends on the consulting firm’s talent strategy.

Where Solve Sits in the McKinsey Funnel

Solve is the second gate, right after the resume screen and before any human interviewer is involved. Pass Solve and you move to the first-round McKinsey Case Interview and Personal Experience Interview. The assessment is used across all offices and roles, and sometimes also to screen candidates for recruiting events.

Fail it and the application cycle ends, typically with an automated rejection email and no feedback on your performance. This is why candidates who plan to “wing it and see what happens” usually regret that choice. One weak 85-minute window ends a recruitment cycle you spent months preparing for.

If you complete the McKinsey Solve Game remotely, feedback typically arrives within 1 to 14 days, depending on office processes and candidate volume. Delays are more common in regions with batch-based recruiting decisions, and rare outliers may wait longer.

How the Solve Scores You (The Part Most Guides Get Wrong)

Solve uses a dual-scoring system: a product score (the quality of your answers) and a process score (how you arrived at them). Both matter. They are weighted roughly equally, which is why the assessment resists brute-force volume prep.

Product signals include:

- Whether your final output is correct or within an optimal range

- How close your quantitative answers fall to the target band

- Whether your choices satisfy all constraints in the brief

- Whether you improve across different variations of the same game

Process signals include:

- Time spent reading the brief vs jumping to action

- Number of data sources opened (too many and too few both raise flags)

- Back-and-forth between screens (excessive backtracking reads as indecision)

- Consistency of approach across similar tasks

- Use of in-game tools (calculator, notepad) vs silent off-screen work

A candidate who pastes a correct answer from an external calculator but leaves the in-game notepad blank looks analytically sloppy to the scoring engine, even if the number is right. The system is built to watch how a consultant thinks, not just what they conclude.

Across both scores, the assessment looks at six underlying skills:

- Problem identification: The ability to recognize the underlying issue that requires resolution

- Information analysis: The skill to source, filter, and interpret data from multiple inputs

- Strategic solution development: The ability to form, test, and refine hypotheses

- Decision making: The capacity to draw sound conclusions under time pressure

- Adaptability: The agility to adjust approach when conditions change

- Quantitative reasoning: The ability to interpret and apply numerical data

This is also why candidates who do 50 random online “PSG simulators” often still fail. Volume trains your fingers to click fast. It does not train you to click in a way that signals structured thinking. For a deeper read on why this approach fails at McKinsey specifically, see our piece on the gap between consulting preparation and what firms want.

The Three Current Solve Modules

1. Red Rock Study

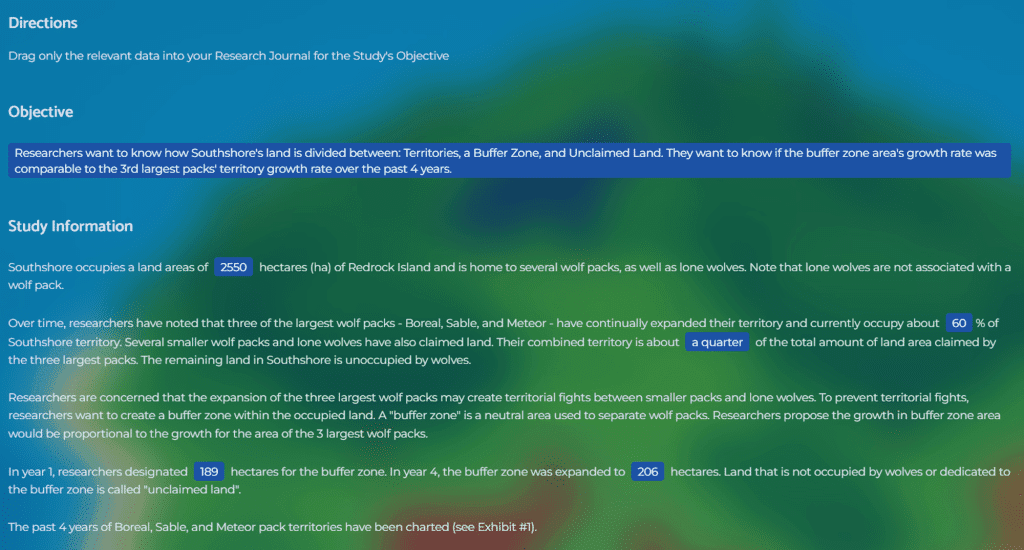

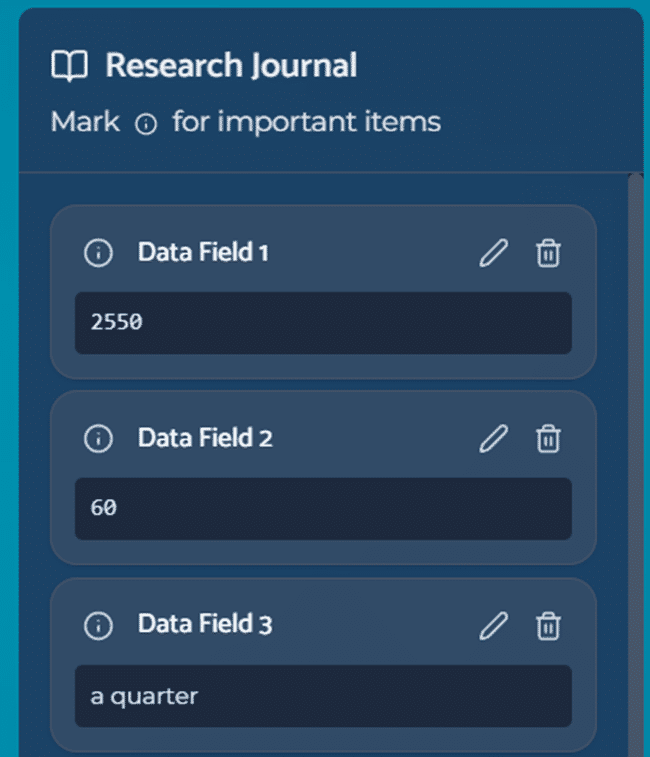

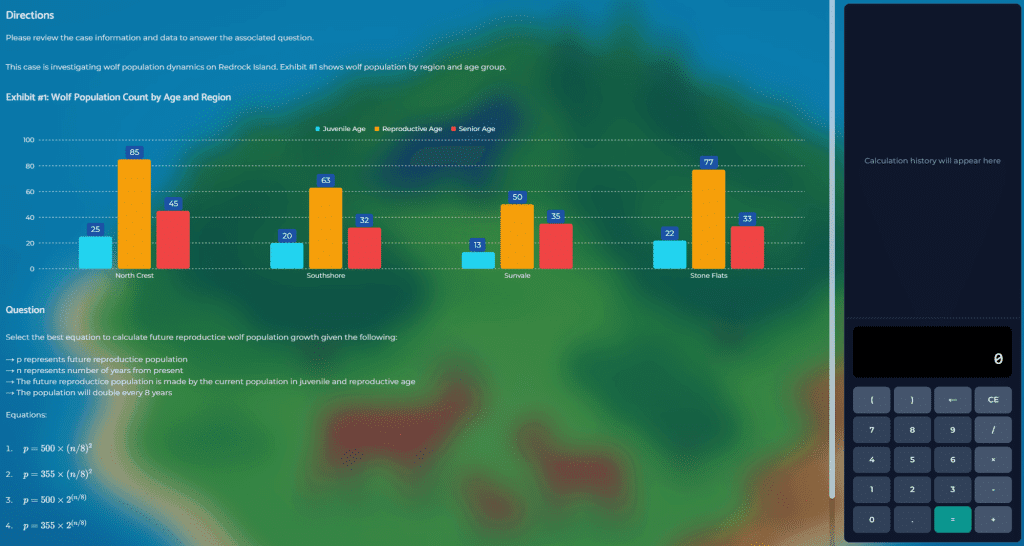

Red Rock is the closest Solve gets to a digital case interview. You play a wildlife researcher studying an endangered species inside a national park, with four distinct stages packed into 35 minutes:

- Investigation Stage, select the most relevant data points from several sources

- Analysis Stage, answer quantitative questions using the data you pulled and an on-screen calculator

- Report Stage, synthesize findings and present them through visual outputs

- Case Section, a rapid-fire round of six analytical mini-cases on species and ecosystem logic

What the scoring engine rewards:

- Reading the research objective before opening any data source

- Selecting and labeling focused data points rather than choosing everything

- Using the in-game calculator and notepad actively

- Setting up streamlined and correct calculations

- Committing to an analytical path and not bouncing between tabs

What wrecks candidates:

- Pulling all data sources without any direction in the first 60 seconds, which flags as unstructured

- Leaving the notepad blank, which gives the scorer no process footprint

- Flipping back to revise multiple times, which reads as indecision

A detailed walkthrough of each stage lives in our Red Rock Game guide.

2. Sea Wolf Simulation

Sea Wolf drops you into an ocean-cleanup scenario with three contaminated sites, a library of microbes with different properties, and a set of constraints. Your job is to pick treatment combinations that clean each site under time pressure.

The game runs in four phases:

- Interpret the brief and configure filters for relevant microbes

- Evaluate each candidate microbe against the site constraints

- Shortlist a pool of viable prospects through forced selections

- Finalize the treatment plan for each of the three sites

Sea Wolf tests multi-variable optimization. You cannot solve it perfectly in 30 minutes, which is deliberate. The design forces 80/20 decisions with explicit stopping rules.

The trap: candidates try to find the “optimal” combination through exhaustive comparison. They burn 15 minutes on Site 1 and rush the other two. A better approach is to set a per-site time budget (10 minutes each), apply filters, pick the best of the viable set, and move on.

The key move: treat each site as its own case, not as one giant optimization problem. The scorer evaluates your approach per site, not across the whole game. Aim for “good enough” (80%+ score) in each site instead of perfect.
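
The "apply filters, pick the best of the viable set, move on" step can be sketched in a few lines of Python. Everything here is hypothetical: the `Microbe` and `Site` classes, the attribute names, and the midpoint-distance ranking are illustrative assumptions, not the actual game mechanics or scoring.

```python
from dataclasses import dataclass, field


@dataclass
class Microbe:
    name: str
    attributes: dict                            # hypothetical numeric properties
    traits: set = field(default_factory=set)    # hypothetical qualitative properties


@dataclass
class Site:
    name: str
    ranges: dict                                # attribute -> (low, high) the site tolerates
    banned_traits: set = field(default_factory=set)


def is_viable(m: Microbe, site: Site) -> bool:
    """Hard filter: every attribute in range and no banned trait."""
    in_range = all(
        k in m.attributes and lo <= m.attributes[k] <= hi
        for k, (lo, hi) in site.ranges.items()
    )
    return in_range and not (m.traits & site.banned_traits)


def fit(m: Microbe, site: Site) -> float:
    """Assumed ranking rule: distance from each range midpoint, lower is better."""
    return sum(abs(m.attributes[k] - (lo + hi) / 2) for k, (lo, hi) in site.ranges.items())


def pick(library: list, site: Site, k: int = 3) -> list:
    """Filter first, then take the k best of the viable set and stop."""
    viable = [m for m in library if is_viable(m, site)]
    return sorted(viable, key=lambda m: fit(m, site))[:k]


site = Site("Site 1", {"heat": (4, 8), "salinity": (2, 6)}, {"toxic"})
library = [
    Microbe("A", {"heat": 6, "salinity": 4}, {"aerobic"}),
    Microbe("B", {"heat": 9, "salinity": 4}, {"aerobic"}),  # heat out of range
    Microbe("C", {"heat": 5, "salinity": 3}, {"toxic"}),    # banned trait
    Microbe("D", {"heat": 7, "salinity": 5}),
    Microbe("E", {"heat": 4, "salinity": 2}),
]
print([m.name for m in pick(library, site, k=2)])
```

The point of the sketch is the order of operations: the hard filter shrinks the library before any comparison happens, and the ranking stops at `k` picks instead of comparing every combination.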

For a deep dive into the Sea Wolf mechanics and strategies, see our dedicated Sea Wolf game guide.

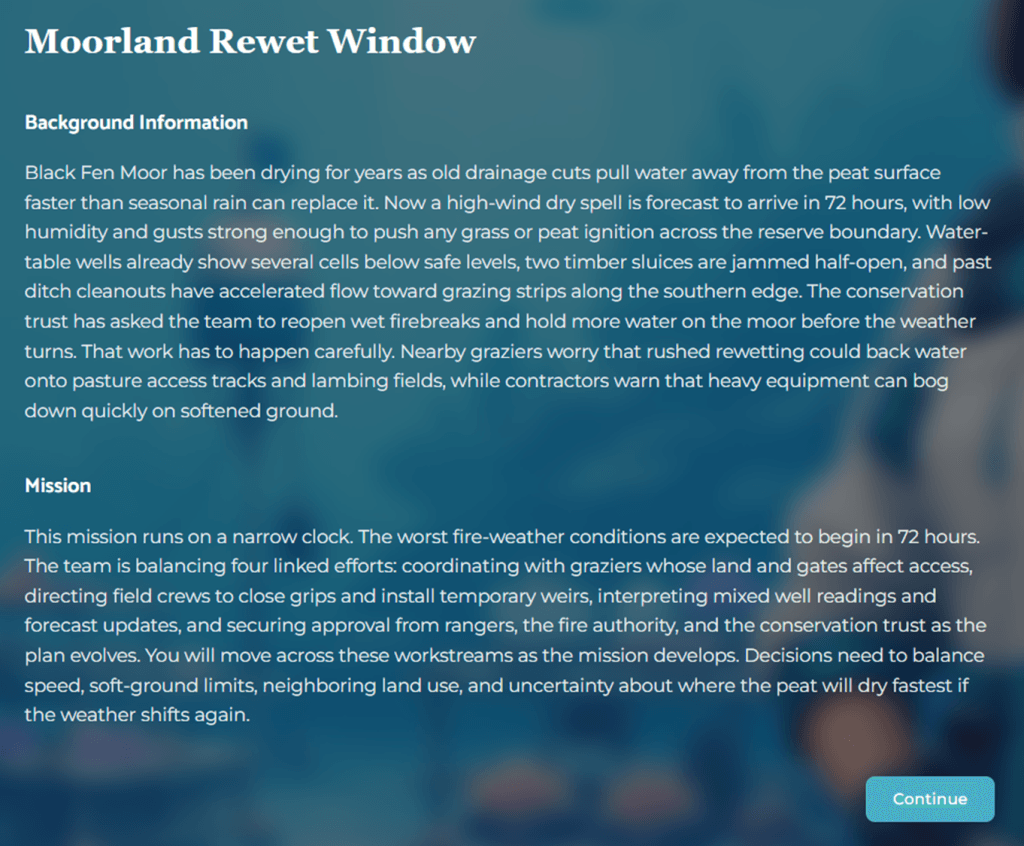

3. Sustainable Future Lab

Added in early 2026 across the globe, Sustainable Future Lab is fundamentally different from the other two games. It is behavioral, not analytical.

You are placed inside an environmental research team. Across 13 sequential questions, you:

- Rank priorities via drag-and-drop at the opening

- Make scenario-based multiple-choice decisions as the project evolves

- Balance trade-offs between stakeholders, timelines, and resources

There are no calculations and no optimization puzzles. The module measures behavioral traits:

- Prioritization under conflicting signals

- Decision-making under uncertainty

- Sticking to a coherent decision logic

- Interpreting messy, incomplete information

- Balancing trade-offs (cost vs speed vs stakeholder fit)

- Team and stakeholder effectiveness

The insider read: this module exists because McKinsey’s analytical games do not surface behavioral maturity. Consulting work is roughly 70% judgment and 30% analysis. Sustainable Future Lab is the firm’s attempt to front-load that filter before you reach the PEI round. If you want to understand how that behavioral screening continues in the human rounds, see our McKinsey PEI guide.

For a scenario-by-scenario walkthrough of Sustainable Future Lab, see our Sustainable Future Lab guide.

The Pass Rate Nobody Talks About

McKinsey does not publish Solve pass rates. Based on candidate reports and discussions since November 2019:

- Unaided pass rate (no structured prep): roughly 10 to 20%

- With random online simulators only: roughly 40 to 50%

- With strategy-first structured prep and realistic simulations: 75 to 90%

The gap between “grinding simulators” and “structured preparation” is the entire point of this article. More reps alone do not fix a weak approach. They make a weak approach faster.

The Three-Layer Prep Model

After watching candidates either pass or get filtered at this stage, the pattern is clear. Candidates who pass build their prep in three layers, not three stacks of practice games.

Layer 1: Strategy Layer (What to Do and Why)

Before you touch a simulator, write a game-by-game strategy playbook (or use one that has been written for you). It should read like a consulting workplan, not a study guide.

For Red Rock:

I will read the research objective for 60 seconds before selecting any data. I will select the key data sources, not everything available. I will budget 5 minutes for the Investigation, 10 minutes for analysis, 5 minutes for the report stage, and 10 minutes for the mini-cases. I will use the in-game calculator for every calculation.

For Sea Wolf:

From the very first task, I will follow the game rules that set me up for success. I will set the right filters first, then shortlist, then pick. I will allocate 10 minutes per site and stop when time is up, even if I think I could do better. I will not backtrack after committing to a treatment plan.

For Sustainable Future Lab:

I will rank priorities around stakeholder impact and project viability, not personal preference. I will answer decisively and not revisit earlier answers. Consistency across scenarios matters more than any single response.

This layer is the basis of your approach and game-taking strategy. Skip it, and everything below is wasted effort.
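
Before you commit to a playbook, check that its time budgets actually fit inside each game. The sketch below does that for the Red Rock and Sea Wolf budgets above. It assumes the 60-second read of the objective is counted separately from the 5-minute Investigation budget, which the playbook does not state explicitly.

```python
# Game time limits in minutes (from the module overview).
GAME_LIMITS = {"Red Rock": 35, "Sea Wolf": 30}

# Playbook budgets in minutes, taken from the sample playbook above.
# Assumption: the 60-second read is separate from the Investigation budget.
PLAYBOOK = {
    "Red Rock": {
        "read objective": 1,
        "investigation": 5,
        "analysis": 10,
        "report": 5,
        "mini-cases": 10,
    },
    "Sea Wolf": {"site 1": 10, "site 2": 10, "site 3": 10},
}


def slack(game: str) -> int:
    """Minutes left over after all budgeted steps for a game."""
    return GAME_LIMITS[game] - sum(PLAYBOOK[game].values())


for game in PLAYBOOK:
    print(f"{game}: {slack(game)} min of slack")
```

Run as written, Red Rock leaves 4 minutes of buffer while Sea Wolf leaves none, so in Sea Wolf any time spent reading the brief comes out of the per-site budgets.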

Layer 2: Realistic Simulation Layer (Pattern Recognition)

Now drill the strategies and key skills. Run full-length simulated games, first individually and then back-to-back to simulate the full 85-minute Solve Game.

This layer builds the reflexes needed to execute your strategy without cognitive overhead. A common issue candidates report is encountering the Solve scenarios for the first time during the actual assessment. As a result, they must simultaneously learn the interface and layout while solving the underlying problems.

If you have practiced with simulations modeled after the McKinsey games, you already know what to expect. This allows you to focus on execution rather than orientation, applying your plan with speed and consistency.

Layer 3: Thorough Debrief and Improvements

Run your simulations with reflection built in. After each one, look at your score and compare the sample answers with your own. Then review your process: What did it look like? Where did you backtrack? Where did you run over on time? Where did you guess without structure?

A handful of well-run, reflected-on simulations beats 50 random attempts every time. Our McKinsey Solve Game Guide and Simulations are built around exactly this three-layer model.

The Mistakes That Wreck Your Process Score

In order of how often they show up in debriefs with candidates who failed:

1. Skipping the brief. Opening data before reading the objective signals unstructured thinking. Budget the first 45 to 60 seconds of each game for reading or organizing only.

2. Having no plan or strategy. The scoring engine rewards selective, hypothesis-driven actions and decisions.

3. Leaving the notepad empty. No process footprint equals no evidence you thought through the problem. Use the in-game tools.

4. Excessive backtracking. Changing screens multiple times reads as indecision. Select, commit, and move on.

5. External tool reliance. Candidates doing all math on their phone or on scrap paper leave no in-game process signal. The scorer cannot see your reasoning.

6. Ignoring the tutorial. Tutorials are untimed. If you did not practice with a simulation, use them to memorize the interface. Candidates who skip tutorials burn a few minutes per game just orienting themselves.

7. Treating Sustainable Future Lab like the other games. There is no right-answer grid. Consistent judgment across scenarios matters more than any single response.

Test Day Tactics That Actually Move the Dial

Sleep beats one more practice run. Cognitive performance drops 20 to 40% when you are fatigued. If you are torn between another simulation the night before and an extra hour of sleep, sleep wins.

Start in the morning if you can. Peak cognitive performance for most candidates is 2 to 4 hours after waking. Schedule Solve inside that window when possible.

Test your setup the day before. Run a browser check on the machine you will use. Close background apps. Have water and a snack within reach. Phone off the desk, in another room if you can.

No Zoom, no email, no notifications. Solve takes 85 active minutes plus tutorials. A single interruption can cost you a full game.

Reset between modules. Five deep breaths between games. Do not let Red Rock frustration bleed into Sea Wolf. Each game is scored independently.

Read every instruction twice. Interface quirks differ by module. A missed instruction in Sea Wolf about forced selections has cost more than a few candidates the offer.

How Solve Fits Into the Full McKinsey Application

Solve is one filter in a multi-stage funnel. To put it in context, the full process usually runs:

1. Resume screen. Our consulting resume guide covers what survives.

2. McKinsey Solve (digital assessment), often run alongside the resume screening or, in some offices, combined with a single first-round interview.

3. First-round interviews, two to three cases and two to three PEI interviews

4. Second-round (final) interviews, two to three cases and PEIs

Compound the probabilities of passing each round and you land at the roughly 1% overall acceptance rate McKinsey publicly reports. Solve alone removes four out of five candidates. Preparation here has outsized leverage on the total funnel.
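
As a back-of-the-envelope check, the two figures above imply a combined pass rate for everything other than Solve. This is a sketch on the article's rounded numbers only. It treats the stages as independent multipliers, which is a simplifying assumption, and the result is not McKinsey data.

```python
overall_acceptance = 0.01  # roughly 1% overall (from the text)
solve_pass_rate = 0.20     # "four out of five" removed, so about one in five pass


def implied_rest(overall: float, known_stage: float) -> float:
    """Combined pass rate of all other stages, assuming stages multiply."""
    return overall / known_stage


rest = implied_rest(overall_acceptance, solve_pass_rate)
print(f"All other stages combined: about {rest:.0%}")
```

Under these assumptions the resume screen and both interview rounds together let through roughly one candidate in twenty, which shows how much of the overall filtering Solve does on its own.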

Frequently Asked Questions

How long is the McKinsey digital assessment in 2026?

85 minutes of active test time across three modules (Red Rock, Sea Wolf, Sustainable Future Lab), plus 5 to 10 minutes of untimed tutorials per game. Budget more than two hours of uninterrupted focus from start to finish.

Can I prepare for the McKinsey Solve?

Yes, despite McKinsey’s official line that “no preparation is needed.” Candidates who prep with structured strategy and realistic simulation consistently pass. What does not help is grinding random simulators without reflection.

What is the pass rate for McKinsey Solve?

McKinsey does not publish it. Based on StrategyCase survey data across thousands of users and discussions with case coaching clients, unaided pass rates sit around 10 to 20% (depending on office and recruiting demand). Structured preparation pushes that to 75 to 90% depending on the prep depth.

Is Solve the same in every country?

Yes. The 85-minute three-module version is currently standard across all countries and roles.

What happens if I fail Solve?

You cannot retake it for 12 to 24 months depending on office. A Solve fail typically ends the application cycle for that window with no feedback.

Does McKinsey track my behavior during the game?

Yes. Every click, hover, backtrack, and tool usage feeds the process score alongside your final answers. This is why process-aware preparation outperforms volume-based prep.

Can I use a calculator or external notes?

Use the in-game calculator and notepad as your primary tools. External tools leave no process footprint and weaken your scoring profile. A single off-screen sheet for quick side calculations is fine. Doing all your math off-screen is not.

What to Do Next

The McKinsey Solve assessment is conquerable, but only if you treat it like a structured skill to develop, not a game to play more of. Three actions to take this week:

1. Build your game-by-game playbook first. Strategy before simulation, every time.

2. Drill the mechanics daily for a few days to a week. Working through simulations of the game and gaining familiarity with the interface, questions, and answer patterns beats guessing or just reading about the assessment every time.

3. Reflect after every practice run. What did your process look like, not just how did you score?

If you want the strategy, mechanics drills, and realistic simulations built into one structured program, our McKinsey Solve Game Guide and Simulations walk through exactly that.

You get one shot per 12 to 24 months at Solve. Prep like it matters.

The formal reapplication wait period varies by program and office, but a weak result typically means you must return later with a stronger profile and a better story.

Updated April 21, 2026 | By Florian Smeritschnig, Former McKinsey Senior Consultant

15 Responses

Hello, thank you for this introduction. I would like to ask about one thing. In the ecosystem… From all 8 species – they have to survive? Or they can be eaten by predators? I understand how to create the food chain, but still…if you create a food chain and the species do not replicate, they will be eaten by predators…

Dear Lenka,

All species in the food chain (animals and plants) need to survive. For each species, the calories it provides minus the sum of the calories its predators need should always be positive.

Cheers,

Florian
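
The rule in this reply can be written as a quick check. This is a minimal sketch of that one rule for the earlier ecosystem game discussed in these comments. The species names and calorie numbers are made up, and the real game may apply further rules that are not modeled here.

```python
# Hypothetical food chain: species -> (calories provided, calories needed by each predator).
# All names and numbers are invented for illustration.
FoodChain = dict[str, tuple[int, list[int]]]


def surplus(provided: int, predator_needs: list[int]) -> int:
    """Calories left after every predator of this species has eaten."""
    return provided - sum(predator_needs)


def is_sustainable(chain: FoodChain) -> bool:
    """True if every species keeps a strictly positive calorie surplus."""
    return all(surplus(p, needs) > 0 for p, needs in chain.values())


stable = {
    "grass": (5000, [1800, 2000]),
    "rabbit": (2500, [2100]),
    "fox": (1500, []),
}
# Same chain, but the rabbit now provides less than its predator needs.
broken = {**stable, "rabbit": (2000, [2100])}

print(is_sustainable(stable))
print(is_sustainable(broken))
```

A species with no predators, like the fox here, always passes because nothing is subtracted from what it provides.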

hi Florian,

I only have 3 hours before the PSG is due, is it possible or useful to buy the guide given such a short time limit?

Thank you

Dear Angelina,

3 hours would be enough to read through the strategy section, watch the videos and familiarize yourself with the Excel. While that is not ideal and we recommend more time to practice, it would still make sense.

Cheers,

Florian

Hello Florian Daniel or Colleague,

I am very pleasantly surprised to see this guide that you have masterfully compiled. Having tips from insiders is such a confidence boost! I purchased this pack without hesitation and am hoping to try it out before investing in the comprehensive 6h coaching program.

Nonetheless, I wonder if you can email me back by helping me with downloading the actual guide? I encountered a technical issue whereby I completed my payment on my phone, but it became impossible to download it via my laptop. I am very worried as the deadline of the test is approaching so could you please get back to me asap?

Many Thanks

Aspiring Consultant

Hey there,

I have just sent you your documents, which also contain access to the video program.

Please let me know if I can assist further.

Kind regards,

Florian

Hi, how long would you suggest I prepare for the McKinsey digital assessment test after purchasing the digital assessment guide? 2 weeks? 4 weeks?

Hi Emmanuel,

We have candidates who prepare for anywhere between 2 days and 1 month. The shorter your preparation time, the more you should focus on learning the proven strategies we outline in our guide (so that you can implement them properly on game day) and on practicing with the most effective tools we provide to raise your skill levels quickly.

Obviously, when you have more time on your hands, you can prepare in a much more relaxed way and go deeper with all our exercises and tools. Generally, I would say that 2 weeks is the sweet spot we have seen with our candidates and it is rare for them to fail after they have gone through all exercises and tools, practiced the preparation tips, and have our game-plan and strategies internalized over this time period.

4 weeks would give you enough time to prepare without a rush, and in parallel to the case interview practice. In any case, should something change in the game between your purchase and the testing date, we will send you a new version of the guide and the videos free of charge!

Let me know if you have any further questions!

All the best for your preparation and your application.

Florian

I heard that there are also other games that could be part of the PSG like predicting and preventing an environmental disaster. Are you sure that there are ‘only’ the two games you describe?

Hi Luiz, we talk briefly about these potential other scenarios in our Problem Solving Game Guide. Be aware that they were used during the trial stages in 2018/19 only and none of our more than 700 customers has reported on them pro-actively. From the 80+ customers we interviewed since November 2019, all went solely through the ecosystem game and the tower defense-like game. In the ecosystem game, recent candidates report having done the mountain ridge scenario and not the reef (even though this has no impact on the actual gameplay).

Hi, how do I know if I passed the ecosystem simulation task?

Hi Patricia, on an aggregate level the game looks at both your product score (did you produce a good outcome?) and your process score (did you perform well under stress while working towards the outcome?).

In order to pass the ecosystem simulation, ideally, you reach the threshold McKinsey set for both scores (which is unknown). For the product score, you should be able to test your hypotheses during the game and see if your food chain is actually sustainable and works out. However, for the process score, you can only take a guess. McKinsey and Imbellus record every movement of your mouse, every click, as well as how long you pause, go back and forth in the menus, etc. In short, the more you have worked in a calm and collected manner towards selecting your food chain, the higher the chances to reach a solid process score.

Hi, I have one question: is the McKinsey problem-solving game material included in the McKinsey program?

Hi Federico, do you mean the Video Academy or the Interview coaching?