Last Updated on April 16, 2026

The McKinsey Solve Game has become one of the most decisive and least transparent stages of the McKinsey recruiting process. No interviewer. No feedback. Just complex, time-pressured problem solving in a digital environment that many candidates underestimate.

This article is an overview of the Solve Game. If you want deep dives into the current games, use the links below to jump straight to the game-specific strategies:

- Red Rock Study and Case

- Sea Wolf (Ocean Treatment)

- Sustainable Future Lab Game (fully rolled out in April 2026)

For everyone else, this guide explains what the Solve Game is, why McKinsey introduced it, what skills it actually assesses, and how strong candidates approach it. You will learn what to expect, where most applicants go wrong, and how to prepare efficiently without wasting time on irrelevant drills.

Consider this your orientation briefing before you step into the simulation.

NEW GAME UPDATE (March & April 2026): While the Solve has traditionally consisted of two core games, McKinsey has added a third game, the Sustainable Future Lab game. This new format shifts part of the assessment toward behavioral decision-making and team interaction under ambiguity, reflecting how consultants actually operate on projects. This move signals where the assessment is heading – adding a personal fit component to pure problem solving.

History of the McKinsey Solve Game

The McKinsey Solve Game is a central element of McKinsey’s recruiting process, used alongside case interviews and Personal Experience Interviews (PEI). It is a digital problem-solving assessment where candidates face complex, unfamiliar scenarios under time pressure, without interviewer interaction or immediate feedback.

Developed in collaboration with Imbellus (later acquired by Roblox) and behavioral scientists from UCLA CRESST, the Solve Game places candidates into interactive simulations designed to test how they explore information, structure problems, make decisions, and manage trade-offs. Rather than answering business questions on paper, participants operate in dynamic environments that require building sustainable systems, analyzing populations, or optimizing limited resources.

Earlier versions of the assessment included a tower-defense-style scenario where candidates protected plant species from invasive threats. Current versions focus on system-building and resource-allocation challenges across varied simulated environments.

When McKinsey introduced this game-based assessment, it marked a clear break from the traditional pen-and-paper Problem Solving Test. It also came with the message that the game could not be specifically prepared for. This left applicants feeling uncertain about how to best approach the assessment and was a notable change for candidates accustomed to preparing for weeks or sometimes even months to tackle their case interviews.

Despite being in use for several years, the Solve Game remains one of the least transparent parts of the McKinsey selection process. Official guidance is minimal, expectations are rarely spelled out, and candidates receive little to no feedback after completion. This lack of clarity leaves many applicants uncertain about what they will face, how performance is evaluated, and how to prepare effectively. As a result, otherwise well-prepared candidates often leave the assessment disappointed, not because they lack ability, but because they did not know what to expect or how to approach the challenge strategically.

It quickly became clear that the Solve Game was not immune to preparation or strategy. McKinsey’s early messaging to the contrary functioned more as positioning than reality. Our interviews with some of the first candidates who completed the Imbellus Test in London in November 2019 provided valuable early insight. This marked the first formal use of the Solve Game in live recruiting beyond beta testing. Many of these candidates reported that with a clearer understanding of the game format and evaluation criteria, they would have performed materially better. Several had prepared for the traditional Problem Solving Test instead, only learning about the switch to the Solve Game a week before their assessment.

We used this early feedback as a starting point and systematically collected insights from test-takers across multiple countries over the following years. In parallel, we collaborated with subject-matter experts to translate these observations into concrete preparation methods and gameplay strategies, and even recreated the actual games for our clients to play.

The conclusion was consistent.

Contrary to McKinsey’s initial positioning, effective preparation is not only possible but decisive. Candidates who understand what to expect and apply targeted strategies for each game segment develop relevant skills faster and perform significantly better than peers who approach the assessment blindly.

This article provides a structured overview of the Solve Game. It covers five key areas:

- Why McKinsey transitioned from the traditional Problem Solving Test to a gamified assessment, and what this means for candidates

- The games included in the assessment and the variations reported by test-takers

- The skills actually being evaluated, beyond official communication

- Proven preparation methods, exercises, and tools to raise performance

- Practical test-taking strategies to maximize results under time pressure

StrategyCase.com pioneered in-depth analysis of this assessment format based on firsthand test-taker insights. This foundation has allowed us to continuously refine our methods using feedback from a large and diverse candidate base. The program has gone through 24 iterations, most recently updated in April 2026, and incorporates input from more than 600 test-takers and several game designers.

Introduction of the McKinsey Solve Game

“Imagine yourself in a beautiful, serene forest populated by many kinds of wildlife. As you take in the flora and fauna, you learn about an urgent matter demanding your attention: the animals are quickly succumbing to an unknown illness. It’s up to you to figure out what to do—and then act quickly to protect what you can.”

McKinsey & Company

McKinsey uses the Solve Game to reflect broader shifts in the consulting landscape. Client problems are changing, the firm’s service portfolio is expanding, and consulting careers now require a wider range of skill sets. Alongside generalist consultants, McKinsey increasingly hires data scientists, implementation specialists, product and digital designers, and software engineers. A digital, interactive assessment is a natural response to recruiting digital-native talent for this evolving workforce.

The Solve Game uses environmental and system-based simulations as neutral task settings. McKinsey emphasizes that no prior knowledge is required and that traditional preparation should not provide an advantage. The intention is to create an assessment that is accessible to candidates from any academic or professional background. This marks a shift from the former Problem Solving Test, which favored business and quantitatively trained applicants through a pen-and-paper format. The Solve Game aims to reduce background-driven bias by testing problem solving in unfamiliar, abstract environments. Whether this fully succeeds is debatable, as new forms of bias inevitably emerge. We discuss this further later in this article.

Scale is another key driver. McKinsey receives several hundred thousand applications each year. Manual screening is resource-intensive, and strong candidates are often filtered out early due to rigid resume-based criteria.

The Solve Game addresses this challenge in two ways.

First, administering the assessment to an additional candidate comes at negligible marginal cost. Most applicants can complete it remotely, without occupying local recruiting resources. This creates a highly automated and scalable screening step (sounds exactly like what a top-tier management consulting firm would do). By contrast, the former Problem Solving Test required on-site administration and significant staff involvement.

Second, low marginal testing costs allow McKinsey to evaluate a broader pool of applicants beyond those who pass initial resume screens. Candidates who may not meet traditional resume thresholds can still demonstrate their potential through actual problem-solving performance, increasing the likelihood that overlooked talent reaches interview rounds.

To build the Solve Game, McKinsey partnered with a specialist game-based assessment developer, later acquired by Roblox, with the ambition to redefine how human potential is measured. This raises an important question.

Does the Solve Game live up to this ambition and fulfill its role as an effective screening tool for consulting applicants?

The Format of the Solve Game

The current Solve Game format consists of three games completed within a total of 85 minutes. Based on consistent test-taker reports and our data, all candidates currently face the Red Rock Simulation at 35 minutes, the Sea Wolf Simulation at 30 minutes, and the Sustainable Future Lab at 20 minutes.

This structure places strong emphasis on time management. Candidates must balance exploration, analysis, and decision-making while ensuring all games are completed within the fixed time window.

In the following sections, we provide a detailed breakdown of each game, along with practical strategies to manage time effectively and maximize performance.

The Scoring of the Solve Game

At its core, the Solve Game mirrors the logic of consulting case interviews. Candidates must identify problems, gather and interpret data, make decisions under time pressure and incomplete information, and translate findings into actionable solutions. The difference lies in the format. Instead of an interviewer-led discussion, the process is captured digitally and evaluated through algorithmic scoring. The assessment targets six dimensions:

- Problem identification: The ability to recognize the underlying issue that requires resolution

- Information analysis: The skill to source, filter, and interpret data from multiple inputs

- Strategic solution development: The ability to form, test, and refine hypotheses

- Decision making: The capacity to draw sound conclusions under time pressure

- Adaptability: The agility to adjust approach when conditions change

- Quantitative reasoning: The ability to interpret and apply numerical data

To evaluate these dimensions, the Solve Game uses a dual-scoring system.

The product score measures outcome quality. It assesses whether game objectives were achieved, such as building a sustainable system, reaching correct analytical conclusions, or optimizing constrained resources.

The process score evaluates how results were achieved. Every interaction is tracked and translated into behavioral data points. This captures whether candidates follow structured approaches, test assumptions, revisit earlier decisions appropriately, or proceed in an ad hoc manner.

This scoring logic has several important implications for candidates.

First, success is not defined solely by arriving at the correct answer. How you structure the problem, explore data, and sequence decisions is equally important. Candidates who apply disciplined problem-solving processes are rewarded even when outcomes are not perfect.

Second, the assessment captures behavior under pressure. It evaluates decision speed, response to incomplete information, and the ability to maintain structure in dynamic environments.

Finally, constant behavioral tracking can increase perceived pressure, as every action contributes to the evaluation.

At first glance, this process-focused design appears difficult to prepare for. However, our data shows that the range of viable solution paths in the current games is relatively narrow. Effective strategies and step-by-step approaches can be learned and practiced, leading to consistent performance improvements.

Current Roll-out and Scope of the McKinsey Solve Game

It’s all fun and games until your score actually determines your future McKinsey career.

A common question from candidates is whether participation in the Solve Game is mandatory. In nearly all cases, the answer is yes.

The assessment was initially piloted between 2018 and 2019 with several thousand candidates across multiple countries, running in parallel with the former Problem Solving Test. This phase focused on beta testing, data collection, and calibration rather than formal evaluation. McKinsey consultants were also invited to complete trial versions to build internal benchmark data.

Today, the Solve Game is fully embedded in McKinsey’s global recruiting process. Based on our data and consistent candidate reports, it is now used in virtually every country with a McKinsey office. The worldwide rollout was completed during the 2020 recruiting cycle and has since become a standard screening step for the vast majority of applicant profiles.

Since 2022, the Solve Game has also been required for selected recruiting events and early-access programs, such as leadership and diversity initiatives, further extending its reach beyond standard office applications.

In terms of roles, the assessment applies broadly across consulting tracks, including generalist consulting, implementation, digital, research, and analytics roles.

Senior and experienced hires are often exempt from the Solve Game requirement, as their evaluation typically relies more heavily on professional track record and interviews.

Timing of the Solve Game in the McKinsey Recruiting Process

In some regions, candidates are informed of their assessment deadline earlier, sometimes several weeks in advance. In rare cases, offices may still require candidates to complete the Solve Game on-site, occasionally on the same day as case interviews.

Post-Game Process: Waiting for Results

In some offices, the Solve Game is completed in conjunction with first-round interviews. In these cases, game performance is evaluated alongside interview results rather than as a standalone screening step.

Requirements to Pass the McKinsey Solve Game

McKinsey has conducted extensive calibration and beta testing with large pools of candidates and internal staff to refine the Solve Game’s scoring models and difficulty balance. As more applicants become familiar with the assessment format and preparation efforts intensify, average performance naturally rises. To counteract score inflation and preserve differentiation between candidates, the Solve Game is regularly updated and adjusted.

The Skills Assessed by the McKinsey Solve Game

The McKinsey Solve Game does not test specific business knowledge. Instead, it evaluates the same fundamental cognitive abilities and problem-solving skills that traditional case interviews and written assessments were designed to measure, but in a gamified and data-rich environment. To perform well, candidates must:

- Understand what each game is actually testing

- Apply effective preparation methods and repeatable problem-solving strategies

The Core Skills

The games are designed to build a multidimensional profile of each candidate’s cognitive and behavioral capabilities. Every interaction is recorded and analyzed, contributing to both a product score and a process score. The assessment does not only evaluate outcomes. It also measures how candidates think, adapt to new information, and correct course when facing uncertainty.

To score well, candidates must optimize both result quality and problem-solving approach, while understanding the drivers behind each game’s scoring logic.

While McKinsey does not publicly disclose its full evaluation framework, consistent test-taker data and expert analysis indicate that the Solve Game primarily assesses:

- Critical thinking: Forming sound judgments from qualitative and quantitative information

- Decision making: Selecting effective courses of action among competing options

- Metacognition: Using structured strategies such as hypothesis testing and note-taking

- Situational awareness: Recognizing interdependencies and anticipating scenario outcomes

- Systems thinking: Understanding multi-layer cause-and-effect relationships and feedback loops

- Cognitive processing: Absorbing, integrating, and recalling new information efficiently

- Adaptability: Adjusting strategies as conditions change

- Creativity: Developing novel and effective solution approaches

Demonstrating Key Skills

- Critical thinking: Systematically filter large amounts of information, discard irrelevant data, analyze key inputs, and synthesize findings into coherent solutions across both qualitative and quantitative tasks.

- Decision making: Form timely, evidence-based conclusions. The game tracks where you spend time, how you prioritize exploration versus action, and how you translate data into recommendations.

- Metacognition: Apply structured problem-solving habits such as hypothesis-driven exploration, note-taking, and deliberate testing of assumptions. These strategies become visible through your navigation patterns and tool usage.

- Situational awareness: Maintain a clear grasp of objectives, constraints, available options, and remaining time, ensuring that decisions align with the overall task.

- Systems thinking: Recognize interdependencies between variables, such as matching ecosystem conditions to species requirements or anticipating downstream effects of resource allocations.

- Adaptability: Adjust strategies when new information emerges or conditions change, particularly in dynamic scenarios such as the Sea Wolf Simulation.

- Cognitive processing: Absorb, integrate, store, and retrieve information efficiently throughout the assessment.

- Creativity: Develop effective and sometimes unconventional approaches when standard solution paths fall short.

The Current Games of the McKinsey Solve

Before each timed game, candidates complete an untimed tutorial. These walkthroughs introduce game mechanics, objectives, and available tools. Candidates can take as much time as needed to understand the setup before starting the clock. This design ensures that performance in the timed section reflects problem-solving ability rather than confusion about rules or interfaces.

Once a timed game begins, it cannot be paused. This increases the importance of preparation, focus, and time management. Candidates must balance exploration, analysis, and decision-making while staying aware of the remaining time, as interruptions or trial-and-error approaches quickly become costly.

Evolution of Game Scenarios

Since its introduction, the Solve Game has consistently used abstract environmental and system-based scenarios, while the specific games themselves have evolved over time. Earlier versions included Ecosystem Creation and Plant Defense scenarios, followed by temporary additions such as disease-identification and migration-planning simulations used primarily for calibration and internal benchmarking.

As with McKinsey’s broader assessment philosophy, new game variants are periodically introduced to test difficulty balance, scoring consistency, and resistance to overfitting through preparation. These calibration games are typically not used for formal candidate evaluation until fully validated.

At present, all candidates face three scenarios: the Red Rock Simulation, the Sea Wolf Simulation, and the Sustainable Future Lab Simulation.

Red Rock Game

Red Rock is the Solve Game’s closest equivalent to a digital case interview. It tests whether you can run a structured analysis under time pressure without external guidance.

The Study Section

The Study Section follows a three-stage workflow: Investigation, Analysis, and Report. Together, they replicate the consulting process of gathering data, analyzing it, and communicating conclusions.

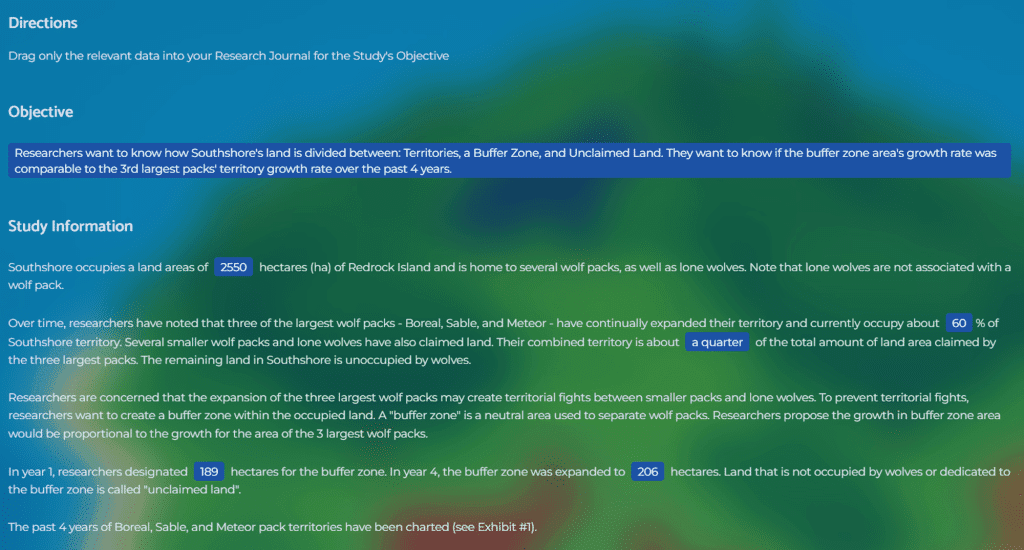

Investigation Stage

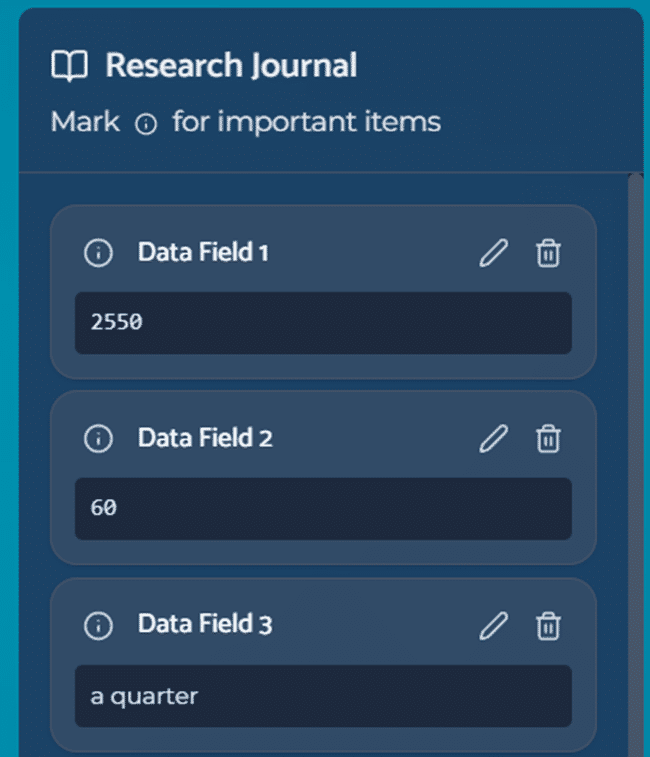

You receive a research objective alongside multiple data sources such as text passages, tables, and charts. Your task is to identify relevant information and transfer it into your on-screen Research Journal.

The key challenge is selectivity. Strong candidates filter aggressively, capture only decision-relevant data, and label it clearly. Weak candidates either collect everything or miss critical inputs. This stage directly feeds your process score, as the game tracks how you explore, prioritize, and document information.

Analysis Stage

You answer a series of quantitative questions based on the collected data. An on-screen calculator is available, but the real difficulty lies in setting up the right equations, choosing the correct inputs, and avoiding unnecessary recalculation. You can move between Investigation and Analysis, but excessive back-and-forth consumes time and signals an unstructured approach.

This stage tests quantitative reasoning, equation setup, and your ability to execute math reliably under time pressure.

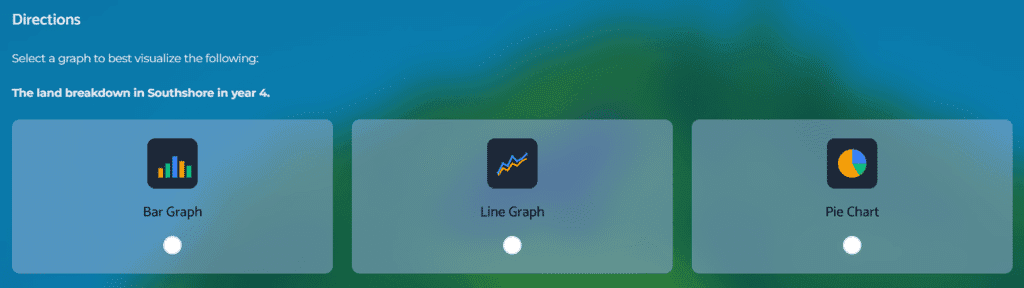

Report Stage

You synthesize your findings into a short written report and select an appropriate visual representation of your results. This mirrors the consulting requirement to translate analysis into clear, decision-ready communication.

Candidates often underestimate this step. Rushing the report after overspending time upstream is a common failure pattern. Clean time allocation across all three stages is therefore essential.

The Case Section

In 2023, McKinsey introduced an additional mini-case component to Red Rock. This section presents a set of quantitative reasoning questions based on new data, distinct from the Study Section. You now complete both the Study and Case Sections within the same 35-minute time limit.

This update materially increased difficulty.

Candidates must manage two different analytical contexts back-to-back while maintaining accuracy and time discipline. Those who enter without a predefined pacing strategy often complete one part well and rush the other.

The Case Section primarily tests rapid data interpretation, quick equation setup, and error-free execution. It is less about complex math and more about speed, structure, and consistency.

Why Preparation Matters Even More Here

Red Rock rewards candidates who already know:

- how to filter data efficiently

- how to structure a research journal

- how to set up equations quickly

- how to allocate time across stages

- how to avoid unnecessary backtracking

Red Rock tests whether you can filter signal from noise, structure quantitative analysis, and communicate conclusions with clarity. These are foundational consulting skills. Candidates who approach the game as a simple puzzle often struggle. Those who treat it like a mini consulting engagement perform materially better.

The introduction of Red Rock marked a shift in McKinsey’s assessment philosophy. Earlier Solve Game scenarios focused on abstract system-building and dynamic simulations. Red Rock moves closer to a classical problem-solving test, resembling digital case formats used by other top-tier firms, while still capturing richer behavioral data through gameplay mechanics.

Strategy for the Red Rock Study Section

The Red Rock Study Section is best approached as a self-guided consulting case. You are given an objective, a set of data sources, and limited time. Your task is to extract the right information, run a focused analysis, and deliver a clear conclusion. Success depends less on raw math ability and more on disciplined workflow and time control.

We recommend a four-step approach.

1. Understand the objective before touching any data (Investigation Stage)

Start by carefully reading the research objective. Do not open data sources immediately. First clarify:

- What’s the overall context and objective?

- Based on this objective, what decision or questions must be answered in later stages?

- Which metrics or comparisons are likely to matter?

Strong candidates treat the objective like a client question. A clear mental problem statement prevents wasted exploration later. Don’t jump into the data selection before having absolute clarity about the objective and context.

2. Target data selection, not data collection (Investigation Stage)

In the Investigation Stage, your goal is not to gather everything. It is to gather only what is decision-relevant.

- Select data sources with a hypothesis in mind

- Drag only key figures (e.g., initial-year and final-year values), constraints, and important definitions into your Research Journal

- Label notes clearly and highlight the most important data points so they are usable during analysis

- Avoid exhaustive reading or random clicking

The game tracks how you explore and what you choose to record. Selectivity and structure directly support your process score and save critical time. Move logically through the text and provided exhibits.

3. Execute analysis with pre-set time and calculation discipline (Analysis Stage)

In the Analysis Stage, the main risk is not math difficulty (you have a calculator for that) but inefficient setup.

- Translate each question into a simple equation before calculating

- Select the right data from your Research Journal

- Become familiar with the drag-and-drop calculation functionality

- Use the calculator only after the equation is clear

- Avoid repeated recalculation or backtracking

Set a time expectation per question and move on once you have double-checked your approach and executed the calculations.
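A pacing plan of this kind can be worked out before test day. The sketch below is purely illustrative: the 20-minute Study budget, the stage weights, the question count, and the buffer share are all assumptions made up for the example, not figures from the game. It only shows how to turn a fixed time budget into a per-question expectation.

```python
def pacing_plan(total_minutes, stage_weights):
    """Split a fixed time budget across stages in proportion to the given weights."""
    total_weight = sum(stage_weights.values())
    return {
        stage: total_minutes * weight / total_weight
        for stage, weight in stage_weights.items()
    }


def per_question_budget(stage_minutes, n_questions, buffer_share=0.1):
    """Minutes available per question after reserving part of the stage as buffer."""
    usable_minutes = stage_minutes * (1 - buffer_share)
    return usable_minutes / n_questions


# Hypothetical inputs: 20 minutes for the Study Section, weighted 2:2:1,
# and four analysis questions. None of these numbers come from the game itself.
plan = pacing_plan(20, {"investigation": 2, "analysis": 2, "report": 1})
analysis_budget = per_question_budget(plan["analysis"], n_questions=4)

print(plan)
print(round(analysis_budget, 2), "minutes per analysis question")
```

Adjust the weights to your own practice results. The point is to walk in with a number per question rather than deciding on the fly.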

4. Communicate findings, do not re-analyze (Report Stage)

In the Report Stage, your job is synthesis, not discovery.

- Fill report statements directly from completed analysis

- Select the graph that best supports the conclusion

- Populate the graph with the right values from your Research Journal (don’t drag the correct value into the wrong field)

Many candidates lose points by entering the Report Stage with unclear expectations of the task.

Strategy for the Red Rock Case Section

The Red Rock Case Section functions as a rapid-fire sequence of 6 mini-cases, some with several questions tied to a shared context. The difficulty level is comparable to the Study Section, but the workflow is compressed. Investigation, analysis, and answering happen in a single continuous stage.

Success in this section depends on swift data targeting, clean equation setup, and decisive execution.

Identify what data is needed before opening sources

Each mini-case starts with a short prompt. Before exploring any data, clarify what the question is asking and which variables are required. This prevents unnecessary reading and random clicking.

Extract only decision-relevant data

Open charts or tables with intent. Pull only the figures required to solve the question and ignore contextual information that does not feed into the calculation.

Set up the equation first, calculate second

Translate the question into a simple formula before touching the calculator. This reduces errors and speeds up execution.

Execute using the interface efficiently

Answers are submitted through drag-and-drop fields or dropdown selections. Familiarity with these mechanics matters. Hesitation here costs time with no analytical benefit.

Treat each mini-case as independent

Do not carry assumptions from prior questions unless explicitly stated. Reset your thinking at the start of each prompt.

The Case Section rewards candidates who combine structured quantitative reasoning with interface fluency and time discipline. Those who have practiced both strategy and real-game execution typically find this part relatively straightforward rather than stressful.

Enhancing Quantitative Reasoning for Red Rock

Strong performance in Red Rock depends heavily on fast and reliable quantitative setup and execution. The math itself is not complex. The challenge lies in extracting the right data, setting up equations quickly, and delivering correct answers under time pressure (don’t underestimate using the drag-and-drop functionality under stress).

Practice with case-style math questions

Regular exposure to case interview math builds the exact skill set Red Rock requires. Data extraction from charts, tables, and exhibits, followed by rapid insight generation, is identical to what you will face in consulting case interviews and in the Red Rock Study and Case Sections.

Train equation setup, not just calculation

Most errors come from poor equation setup, not from arithmetic. Focus on quickly translating questions into simple formulas. Pay particular attention to percentages, growth rates, and averages, as these are the most frequent operations in the game.
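To make "equation setup" concrete, the following sketch writes the most common operations as explicit formulas. The function names and the sample numbers are illustrative assumptions, not taken from the game. Writing the formula down first, then plugging in values, is the habit being trained.

```python
def percent_change(old, new):
    """Percentage change from old to new: (new - old) / old * 100."""
    return (new - old) / old * 100


def share_of_total(part, total):
    """Part expressed as a percentage of the total."""
    return part / total * 100


def compound_growth_rate(start, end, periods):
    """Average growth rate per period in percent: ((end / start) ** (1 / periods) - 1) * 100."""
    return ((end / start) ** (1 / periods) - 1) * 100


def weighted_average(values, weights):
    """Average of values weighted by weights (for example, group sizes)."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


# Made-up example values
print(percent_change(80, 100))               # 25.0
print(share_of_total(30, 120))               # 25.0
print(round(compound_growth_rate(100, 121, 2), 2))
print(weighted_average([10, 20], [1, 3]))    # 17.5
```

A frequent setup error is dividing by the new value instead of the old one in a percentage change, or taking a simple average where group sizes differ and a weighted average is required.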

Use quantitative reasoning drills if simulations are not available

If you do not yet have access to full Solve Game simulations, GMAT-style quantitative reasoning questions are an acceptable substitute. They train structured problem solving, data interpretation, and time-based execution under pressure.

Balance speed with accuracy

You must move fast, but not carelessly. Set time expectations per question and commit to decisions once you reach a defensible answer. If a question stalls, move on.

Master data extraction from visuals

Practice pulling key numbers and trends from charts and tables efficiently. Red Rock rewards candidates who can spot relevant data quickly without reading or copying everything.

Use tools deliberately

Ideally, become comfortable with the on-screen calculator before test day. The tool is simple, but hesitation or repeated recalculation wastes valuable time.

Simulate time pressure

Timed mock sessions build pacing discipline and reduce stress during the real assessment. The goal is to make structured quantitative execution feel routine.

By developing these capabilities, you approach both the Red Rock Study and Case Sections with confidence, speed, and control.

The Sea Wolf Game

Sea Wolf is a 30-minute module of the McKinsey Solve Game. It is now a fully standardized production game encountered by all candidates in the current three-game Solve sequence.

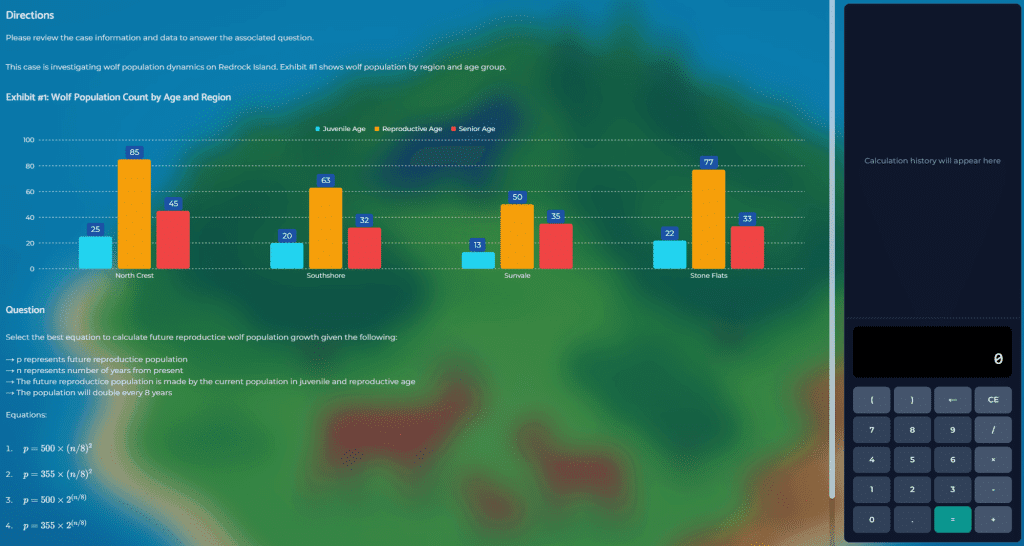

The simulation places candidates in a microbial ocean-cleanup scenario. Behind the storyline, Sea Wolf is a structured multi-constraint optimization task. Candidates must design treatment solutions for three contaminated sites by filtering data, narrowing feasible options, and selecting optimal combinations under time pressure.

Each site follows the same mechanics. Only the input parameters change. This repetition tests whether candidates learn from earlier rounds, accelerate their decision process, and maintain time discipline across the full module.

What Sea Wolf Tests

The Sea Wolf Simulation is designed to assess how candidates translate complex requirements into structured decision logic. Each site presents a set of environmental constraints expressed through numerical ranges and desired or undesired traits. Strong candidates systematically convert these requirements into clear filtering and selection criteria rather than relying on intuition or trial-and-error exploration.

A second core dimension is the ability to filter viable options under multiple simultaneous constraints. Candidates must eliminate infeasible choices quickly, narrow the solution space efficiently, and maintain a clean shortlist of promising options. This tests whether you can manage complexity without becoming overwhelmed by data volume.

Sea Wolf also evaluates quantitative optimization skills. Final solutions depend on averaged attribute values across selected microbes, requiring candidates to anticipate how individual selections influence the overall outcome. The challenge is not advanced math, but setting up the right mental equations and making corrective selections when averages drift away from target ranges.
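The averaging logic can be sketched in a few lines. The attribute names (`attr_1`, `attr_2`), the values, and the target ranges below are hypothetical placeholders. The sketch only illustrates the mental check of averaging a three-microbe selection and spotting which attribute has drifted out of range.

```python
from statistics import mean


def average_attributes(microbes):
    """Average each attribute across the selected microbes."""
    names = microbes[0].keys()
    return {name: mean(m[name] for m in microbes) for name in names}


def out_of_range(averages, target_ranges):
    """Return the attributes whose average falls outside its (low, high) target range."""
    return {
        name: avg
        for name, avg in averages.items()
        if not (target_ranges[name][0] <= avg <= target_ranges[name][1])
    }


# Hypothetical selection and targets, for illustration only
selection = [
    {"attr_1": 4, "attr_2": 7},
    {"attr_1": 6, "attr_2": 9},
    {"attr_1": 5, "attr_2": 8},
]
targets = {"attr_1": (4, 6), "attr_2": (5, 7)}

averages = average_attributes(selection)
drift = out_of_range(averages, targets)
print(averages)
print(drift)
```

In this made-up case, `attr_2` averages above its range, so the corrective move is to swap in a microbe with a lower `attr_2` value while keeping `attr_1` stable.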

Because no option is ever perfect, the game deliberately forces decision-making under imperfect conditions. Candidates must recognize when further optimization yields diminishing returns and commit to a strong solution rather than chasing an unattainable ideal. This ability to balance analytical rigor with pragmatic decision-making closely mirrors real consulting work.

Finally, the repeated three-site structure tests time management and learning agility. Candidates are expected to refine their approach from site to site, increase speed without sacrificing accuracy, and maintain discipline across the full 30-minute module.

Sea Wolf Game Flow and Strategy Summary

The Sea Wolf Simulation follows a fixed four-phase workflow repeated across three contaminated sites. While the environmental storyline changes, the mechanics remain identical. Strong performance comes from executing a consistent decision logic rather than improvising.

Phase 1: Interpret site requirements and configure filters

What happens

Each site presents treatment requirements through numerical attribute ranges and desired or undesired traits. Before any microbes appear, you must select exactly two characteristics to define filtering criteria.

Winning strategy

Start by translating site requirements into filtering logic. Choose characteristics that best eliminate unfit microbes early. Do not jump to solution-building. The game evaluates whether you can convert requirements into structured selection rules.

Phase 2: Evaluate microbes and shortlist viable candidates

What happens

The microbial pool becomes visible, each with attribute values and traits. You must remove microbes that violate attribute ranges or contain undesired traits while ensuring at least one candidate carries the desired trait.

Winning strategy

Filter aggressively and systematically. Eliminate infeasible options first, then narrow to a small, realistic candidate set. Poor filtering at this stage guarantees weak final outcomes.
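As a rough illustration of this elimination logic, the sketch below filters a pool by attribute ranges and undesired traits, then checks whether the desired trait is still represented. The attribute names ("mobility", "energy"), the trait names, and all values are hypothetical placeholders, not the game's actual data.

```python
from dataclasses import dataclass


@dataclass
class Microbe:
    name: str
    attributes: dict  # attribute name -> numeric value
    traits: set       # trait labels carried by this microbe


def is_viable(microbe, ranges, undesired):
    """True if every attribute is inside its range and no undesired trait is present."""
    in_range = all(
        low <= microbe.attributes[attr] <= high
        for attr, (low, high) in ranges.items()
    )
    return in_range and not (microbe.traits & undesired)


def shortlist(pool, ranges, undesired, desired):
    """Return the viable microbes and whether any of them carries the desired trait."""
    viable = [m for m in pool if is_viable(m, ranges, undesired)]
    has_desired = any(desired in m.traits for m in viable)
    return viable, has_desired


# Hypothetical site requirements and pool.
ranges = {"mobility": (2, 6), "energy": (5, 8)}
undesired = {"toxic"}
pool = [
    Microbe("M1", {"mobility": 4, "energy": 7}, {"heat_resistant"}),
    Microbe("M2", {"mobility": 9, "energy": 6}, set()),      # mobility out of range
    Microbe("M3", {"mobility": 5, "energy": 5}, {"toxic"}),  # undesired trait
    Microbe("M4", {"mobility": 3, "energy": 8}, set()),
]

viable, has_desired = shortlist(pool, ranges, undesired, "heat_resistant")
print([m.name for m in viable], has_desired)  # ['M1', 'M4'] True
```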

Phase 3: Build the prospect pool through forced selections

What happens

You start with six microbes and repeatedly choose one out of three presented candidates until your prospect pool contains ten microbes.

Winning strategy

Think in portfolios, not individual picks. Evaluate how each choice shifts the future average attributes of your final three-microbe solution. Avoid undesired traits where possible, prioritize the desired trait if missing, and accept that no pick will be perfect. Optimize the pool, not the single microbe.
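One way to make "optimize the pool, not the single microbe" concrete is to ask, for each offered candidate, how good the best possible three-microbe combination would be if that candidate joined the pool. The sketch below is one possible formulation under simplifying assumptions: a single hypothetical attribute, a pool of only three microbes for brevity, and a made-up "miss" measure (distance of the average from the target range). None of this reflects the game's actual scoring.

```python
from itertools import combinations


def range_miss(value, low, high):
    """Distance by which value falls outside [low, high]; 0 if inside."""
    if value < low:
        return low - value
    if value > high:
        return value - high
    return 0.0


def best_trio_miss(pool, ranges):
    """Smallest total miss achievable by any three microbes in the pool."""
    best = float("inf")
    for trio in combinations(pool, 3):
        miss = sum(
            range_miss(sum(m[attr] for m in trio) / 3, low, high)
            for attr, (low, high) in ranges.items()
        )
        best = min(best, miss)
    return best


def pick_for_pool(pool, offered, ranges):
    """Choose the offered microbe that most improves the best achievable trio."""
    return min(offered, key=lambda c: best_trio_miss(pool + [c], ranges))


# Hypothetical data: the current pool runs high on "mobility".
ranges = {"mobility": (4, 6)}
pool = [
    {"name": "A", "mobility": 8},
    {"name": "B", "mobility": 9},
    {"name": "C", "mobility": 7},
]
offered = [
    {"name": "X", "mobility": 6},
    {"name": "Y", "mobility": 1},
    {"name": "Z", "mobility": 5},
]

choice = pick_for_pool(pool, offered, ranges)
print(choice["name"])  # Y
```

In this example, Z looks best in isolation because it sits in the middle of the target range, yet Y is the stronger pick: its low value is the only one that can pull a trio average from this high-running pool into range.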

Phase 4: Finalize the treatment

What happens

From your prospect pool, you select three microbes whose averaged attributes fall within site target ranges and whose traits best match site preferences.

Winning strategy

Stop searching for a perfect solution. Once a high-quality configuration is available, commit. Over-optimization and late indecision are common causes of time failure.
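The final selection can be thought of as a small search over all three-microbe combinations in the prospect pool. The sketch below keeps the combinations whose averages fall inside the target ranges and ranks them with a simple trait score. The scoring rule (+1 if the desired trait is present, -1 per undesired trait), the attribute and trait names, and the four-microbe pool are assumptions for illustration, not the game's real scoring.

```python
from itertools import combinations


def feasible(trio, ranges):
    """True if the trio's average for every attribute lies inside its target range."""
    for attr, (low, high) in ranges.items():
        average = sum(m["attributes"][attr] for m in trio) / len(trio)
        if not low <= average <= high:
            return False
    return True


def trait_score(trio, desired, undesired):
    """Assumed scoring: +1 if the desired trait is present, -1 per undesired trait."""
    traits = set().union(*(m["traits"] for m in trio))
    return int(desired in traits) - len(traits & undesired)


def choose_treatment(pool, ranges, desired, undesired):
    """Return the feasible trio with the best trait score, or None if none is feasible."""
    candidates = [t for t in combinations(pool, 3) if feasible(t, ranges)]
    if not candidates:
        return None
    return max(candidates, key=lambda t: trait_score(t, desired, undesired))


# Hypothetical prospect pool (the real pool holds ten microbes).
pool = [
    {"name": "P1", "attributes": {"mobility": 3, "energy": 6}, "traits": {"heat_resistant"}},
    {"name": "P2", "attributes": {"mobility": 5, "energy": 7}, "traits": set()},
    {"name": "P3", "attributes": {"mobility": 7, "energy": 5}, "traits": {"toxic"}},
    {"name": "P4", "attributes": {"mobility": 4, "energy": 8}, "traits": set()},
]
ranges = {"mobility": (3.5, 5), "energy": (6, 7.5)}

treatment = choose_treatment(pool, ranges, "heat_resistant", {"toxic"})
print([m["name"] for m in treatment])  # ['P1', 'P2', 'P4']
```

Three of the four combinations here are feasible, which is the point of the stopping rule: once a feasible trio with a good trait match exists, further searching rarely changes the outcome.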

Why Candidates Struggle

Unprepared candidates typically:

- Filter inconsistently

- Optimize individual picks instead of the final solution

- Spend too long on the first site and rush the rest

Prepared candidates enter knowing the workflow, filtering logic, and decision sequence. They spend test time executing, not discovering mechanics.

Sustainable Future Lab (New in April 2026)

The Sustainable Future Lab is a new module within McKinsey Solve that fundamentally changes how candidates are assessed. Instead of focusing on analytical problem-solving or pattern recognition, this module evaluates how candidates make decisions in complex, evolving, and ambiguous environments.

A shift from solving problems to operating systems

In contrast to traditional case-like assessments, candidates are not given a clearly defined problem with a single objective. Instead, they are placed into a live mission scenario where they must operate within a system that includes multiple workstreams, stakeholders, and constraints. The task is not to find the “right answer,” but to continuously make decisions that move the mission forward.

The environment is intentionally realistic. Candidates face time pressure, incomplete information, operational limitations, and conflicting stakeholder interests. These elements are not distractions. They are the core of the assessment.

Sequential decision-making in a dynamic environment

The game unfolds through a series of linked decisions. Each choice influences the context of what comes next, and new information is introduced throughout the mission. Constraints tighten, risks evolve, and priorities shift over time.

This creates a dynamic environment where candidates must adapt their decisions without losing coherence. They are not optimizing a fixed system once, but managing a system that continuously changes.

No correct answers, only consistent logic

A defining feature of the Sustainable Future Lab is that there are no clearly correct answers. Each option presented is plausible and comes with trade-offs. The assessment does not reward isolated decisions, but rather evaluates the pattern of decisions across the entire scenario.

Strong performance comes from applying a consistent decision logic. Candidates who change their approach from one situation to the next are penalized, even if individual answers appear reasonable. The key is to maintain a clear and stable way of thinking while adapting to new inputs.

What the module is designed to test

The Sustainable Future Lab introduces a behavioral layer to McKinsey Solve. It assesses how candidates:

- make decisions under constraints such as time and uncertainty

- manage trade-offs between speed, risk, and stakeholder alignment

- think in systems, understanding dependencies and downstream effects

- prioritize and sequence actions effectively

- navigate stakeholder dynamics while maintaining progress

These are the core skills required in real consulting work, where problems are rarely clean and decisions must be made despite ambiguity.

Implications for your preparation

This shift in assessment has direct implications for how candidates should prepare. Traditional preparation methods focused on calculations, frameworks, or pattern recognition are not sufficient.

Success in the Sustainable Future Lab depends on the ability to apply a clear, structured decision-making approach consistently across the entire mission. Candidates must be able to balance competing priorities, adapt without overreacting, and maintain forward momentum without breaking the system they are operating in.

The Sustainable Future Lab is not testing knowledge, personality, or isolated judgment. It is testing whether a candidate can operate effectively within a complex system under pressure, making consistent, well-reasoned decisions as conditions evolve.

This makes it the closest representation within McKinsey Solve of what consulting actually requires.

We have compiled a deep dive on the Sustainable Future Lab Solve Game here.

The Former Games of the McKinsey Solve Game

- Ecosystem Creation

- Plant Defense

- Disease Identification

- Disaster Identification

- Migration Planning

Preparing for the McKinsey Solve Game

Why Preparation is Non-Negotiable

The baseline pass rate is low. Candidates who walk in unprepared routinely underestimate the time pressure, the need for structured decision-making, and how quickly small mistakes compound. Preparation fundamentally shifts the odds. If you have never seen the games before or do not know which actions to take at each stage, you will spend valuable time figuring things out while the clock is running. The goal is to enter the first game already knowing how to navigate the environment, what to prioritize, and how to commit to decisions.

The downside is significant. In many candidate tracks, failing the assessment can materially delay your recruiting timeline. Although the formal reapplication wait period varies by program and office, a weak result typically means you must return later with a stronger profile and a better story.

The game rewards learnable behaviors. The Solve Game is built to measure higher-order thinking: structuring, hypothesis testing, prioritization, and decision quality under uncertainty. These are trainable skills. The best candidates do not “wing it,” they execute a method.

Digital familiarity is an advantage, unless you neutralize it. Comfort with interfaces, drag-and-drop mechanics, and time-based navigation can create an edge when playing the games. The fastest way to remove this bias is targeted practice in a realistic environment.

The Preparation Model That Works

Most candidates fail because they pursue either strategy without execution or execution without strategy. You need both.

Step 1: Build the strategy layer (what to do and why).

This is where most generic advice stops. You need a clear mental model of each game’s objective, scoring drivers, and the common traps. That is exactly what our strategy guide and video course are designed to provide: step-by-step approaches that are robust across variations, plus the logic behind them so you can adapt under pressure.

Step 2: Convert strategy into muscle memory (how to execute under time pressure).

Knowing the right approach is not enough if you cannot execute it quickly and calmly. This is why realistic simulations matter. They train interface speed, decision sequencing, note-taking discipline, and time management. In other words, they turn “I understand” into “I can deliver in 65 minutes” (and usually much faster after practice).

What “Good Preparation” Actually Looks Like

Use these principles to guide your practice, regardless of which resources you use:

Master the objective first, then optimize. Start every game by clarifying the goal, constraints, and success metric. Candidates lose points by optimizing the wrong thing.

Operate with time-boxes and stopping rules. Decide in advance how long you will spend exploring, analyzing, and executing. If a decision exceeds your time-box, choose the best available option and move.

Be hypothesis-driven, not exhaustive. Explore with intent. Form an initial hypothesis, gather only the data needed to validate it, then iterate. Random clicking and “reading everything” wastes time and weakens process signals.

Document like a consultant, not a student. Keep notes short, structured, and actionable. Label key variables, assumptions, and constraints so you can act quickly without re-reading.

Practice the interface, not just the logic. Drag-and-drop accuracy, navigation speed, and tool familiarity directly influence performance because the timed games cannot be paused. If you don’t use a simulation beforehand, take extra time in the tutorials to practice.

Recommended Path

If you want the highest probability of success with the least wasted effort, follow this order:

- Learn the strategies: Use the guide and videos to understand what each game tests, how scoring works, and which decisions matter.

- Practice realistically: Use full simulations to build speed, execution discipline, and confidence under real timing.

- Refine with feedback: Identify where you lose time or make repeat errors, then re-run with specific improvement targets. Our simulations come with a detailed feedback report for exactly that reason.

Test-taking Tips and Advice

Do not chase “the right answer,” execute a winning process: Every candidate sees different numbers and variations. Trying to replicate outcomes from other people’s experiences is a trap. Focus on clean problem definition, hypothesis-driven exploration, and consistent decision logic.

Work forward, not backward: The Solve Game rewards coherence. In all games, verify your inputs and assumptions before you commit, then execute decisively. Avoid frequent backtracking (especially in the Red Rock game when you switch from the Analysis Stage back to the Investigation Stage), as it burns time and often signals an unstructured approach.

Use 80/20 decision-making with explicit stopping rules: You rarely have time for perfect optimization, especially in the Sea Wolf game. Aim for the best answer you can justify within the constraints. Set clear cutoffs, for example: “I will explore for X minutes, then decide,” or “I will test up to Y options, then lock the best-performing one.” Sometimes, a 100% perfect outcome is not possible in the Sea Wolf game.

Treat the tutorial as free points: The tutorials are untimed. Use them to understand mechanics, scoring drivers, and available tools before the clock starts. Most candidates waste this advantage and pay for it later with avoidable trial-and-error. If you train with our game simulations, you will already know how the games operate, where to click, how to use drag-and-drop mechanics, and how to apply the right strategies, allowing you to execute confidently and feel fully at home in the assessment.

Read instructions like a contract: Many failures come from missing a constraint or objective, not from weak math. Before you act, confirm: objective, constraints, success metric, and what exactly is being asked. A single missed detail can invalidate an otherwise strong approach in the Red Rock game.

Build a clean research journal, then leverage it: In the Red Rock game, use consistent labels, short headings, and highlight key numbers and insights. This improves your analysis speed and visibly supports a structured process. Your notes should let you resume instantly after any decision point.

Time-box every phase, then enforce it: Once the timed section starts, you cannot pause. In the Red Rock game, enter with a plan for how long you will spend on exploration, analysis, and execution. If a step is running long, cut scope, decide, and move. In the Sea Wolf game, allocate an equal amount of time to each site. Candidates typically fail by over-investing early and rushing critical decisions late.

Do not “hunt” for data, target it: Explore with a purpose. Start with a hypothesis, then collect only the information required to confirm or reject it. Random clicking and exhaustive reading look thorough but usually score poorly and kill your clock.

Beat the McKinsey Solve Game

- Crack every game: Proprietary guide and video insights detailing the exact steps and strategies used by successful candidates

- Score high: Tailored tactics and gameplay walkthroughs based on real test-taker feedback

- Prepare efficiently: Focus on what matters most and avoid wasted preparation time with proven methods to master all required skills

- Interview-ready bonus: Includes a free 14-page McKinsey Interview Primer with essential guidance for case and PEI preparation

*Based on customer feedback from January to March 2026

Latest update: April 2026

Our Credentials

- 9,000+ candidates supported from more than 70 countries since November 2019

- 600+ test-taker interviews informing continuous refinement, combined with expert game designer input and firsthand McKinsey experience

- Complete preparation suite including fully playable game simulations, a 142-page strategy guide, automated Excel tools for Ecosystem Creation, and a video course covering gameplay and winning strategies

- 100% proprietary content

McKinsey Solve Game Guide 24th Edition

SALE: $169 / $99

15 Responses

Hello, thank you for this introduction. I would like to ask about one thing. In the ecosystem… From all 8 species – they have to survive? Or they can be eaten by predators? I understand how to create the food chain, but still…if you create a food chain and the species do not replicate, they will be eaten by predators…

Dear Lenka,

All species in the food chain (animals and plants) need to survive. For each species, the calories it provides minus the calories its predators need should always be positive.

Cheers,

Florian

hi Florian,

I only have 3 hours before the PSG is due, is it possible or useful to buy the guide given such a short time limit?

Thank you

Dear Angelina,

3 hours would be enough to read through the strategy section, watch the videos, and familiarize yourself with the Excel. While this is not ideal and we recommend more time to practice, it would still make sense.

Cheers,

Florian

Hello Florian Daniel or Colleague,

I am very pleasantly surprised to see this guide that you have masterfully compiled. Having tips from insiders is such a confidence boost! I purchased this pack without hesitation and am hoping to try it out before investing in the comprehensive 6h coaching program.

Nonetheless, I wonder if you can email me back by helping me with downloading the actual guide? I encountered a technical issue whereby I completed my payment on my phone, but it became impossible to download it via my laptop. I am very worried as the deadline of the test is approaching so could you please get back to me asap?

Many Thanks

Aspiring Consultant

Hey there,

I have just sent you your documents, which also contain access to the video program.

Please let me know if I can assist further.

Kind regards,

Florian

Hi, how long would you suggest I prepare for the McKinsey digital assessment test after purchasing the digital assessment guide? 2 weeks? 4 weeks?

Hi Emmanuel,

We have candidates that prepare between 2 days and 1 month. The shorter your preparation time, the more your focus should be on learning the proven strategies we outline in our guide (so that you can implement them properly on the game day) and go through and practice the most effective and important tools we provide you with to quickly raise your skill levels.

Obviously, when you have more time on your hands, you can prepare in a much more relaxed way and go deeper with all our exercises and tools. Generally, I would say that 2 weeks is the sweet spot we have seen with our candidates and it is rare for them to fail after they have gone through all exercises and tools, practiced the preparation tips, and have our game-plan and strategies internalized over this time period.

4 weeks would give you enough time to prepare without a rush, and in parallel to the case interview practice. In any case, should something change in the game between your purchase and the testing date, we will send you a new version of the guide and the videos free of charge!

Let me know if you have any further questions!

All the best for your preparation and your application.

Florian

I heard that there are also other games that could be part of the PSG like predicting and preventing an environmental disaster. Are you sure that there are ‘only’ the two games you describe?

Hi Luiz, we talk briefly about these potential other scenarios in our Problem Solving Game Guide. Be aware that they were used during the trial stages in 2018/19 only and none of our more than 700 customers has reported on them pro-actively. From the 80+ customers we interviewed since November 2019, all went solely through the ecosystem game and the tower defense-like game. In the ecosystem game, recent candidates report having done the mountain ridge scenario and not the reef (even though this has no impact on the actual gameplay).

Hi, how do I know if I passed the ecosystem simulation task?

Hi Patricia, on an aggregate level the game looks at both your product score (did you produce a good outcome?) and your process score (did you perform well under stress while working towards the outcome?).

In order to pass the ecosystem simulation, ideally, you reach the threshold McKinsey set for both scores (which is unknown). For the product score, you should be able to test your hypotheses during the game and see if your food chain is actually sustainable and works out. However, for the process score, you can only take a guess. McKinsey and Imbellus record every movement of your mouse, every click, as well as how long you pause, go back and forth in the menus, etc. In short, the more you have worked in a calm and collected manner towards selecting your food chain, the higher the chances to reach a solid process score.

Hi, I have one question: is the McKinsey problem-solving game material included in the McKinsey program?

Hi Federico, do you mean the Video Academy or the Interview coaching?